- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

CRAY XE6 Hardware and Architecture

Revision as of 17:48, 12 January 2011

Hardware of Installation step 0

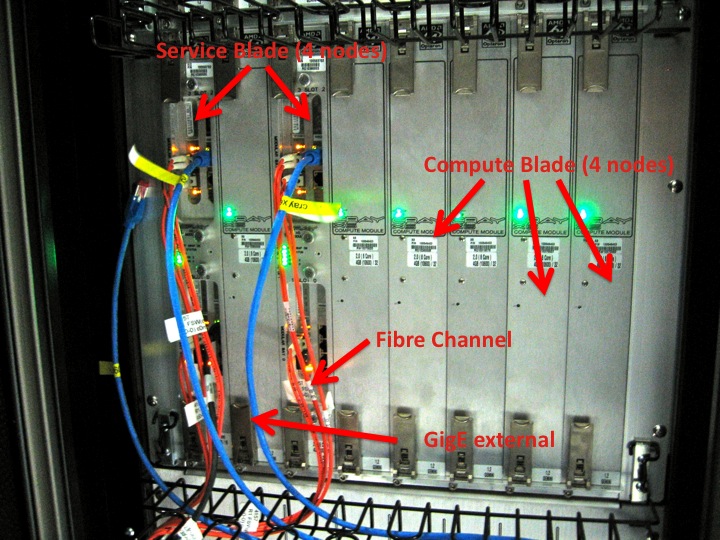

- 84 compute nodes / 1344 cores

  - dual socket G34

    - Magny-Cours @ 2 GHz (Opteron(tm) Processor 6128)

    - 512KB L2 Cache, 12MB L3 Cache

    - HyperTransport HT3

    - 16 cores

  - 32GB memory

- 12 service nodes

  - 6-Core AMD Opteron(tm) Processor 23 (D0)

    - 2.2GHz

  - 16GB memory
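The totals in the list above follow directly from the per-node figures. A minimal sketch of that arithmetic, assuming each compute node carries two 8-core Opteron 6128 sockets (16 cores per node) and 32 GB of memory; the variable names are illustrative only:

```python
# Per-node figures taken from the hardware list above.
COMPUTE_NODES = 84
SOCKETS_PER_NODE = 2        # dual socket G34
CORES_PER_SOCKET = 8        # assumption: the Opteron 6128 is an 8-core part
MEMORY_PER_NODE_GB = 32

cores_per_node = SOCKETS_PER_NODE * CORES_PER_SOCKET
total_cores = COMPUTE_NODES * cores_per_node
total_memory_gb = COMPUTE_NODES * MEMORY_PER_NODE_GB
memory_per_core_gb = MEMORY_PER_NODE_GB / cores_per_node

print(f"cores per node:    {cores_per_node}")
print(f"total cores:       {total_cores}")
print(f"total memory (GB): {total_memory_gb}")
print(f"memory per core:   {memory_per_core_gb} GB")
```

The computed core count matches the 1344 cores stated for the 84 compute nodes.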

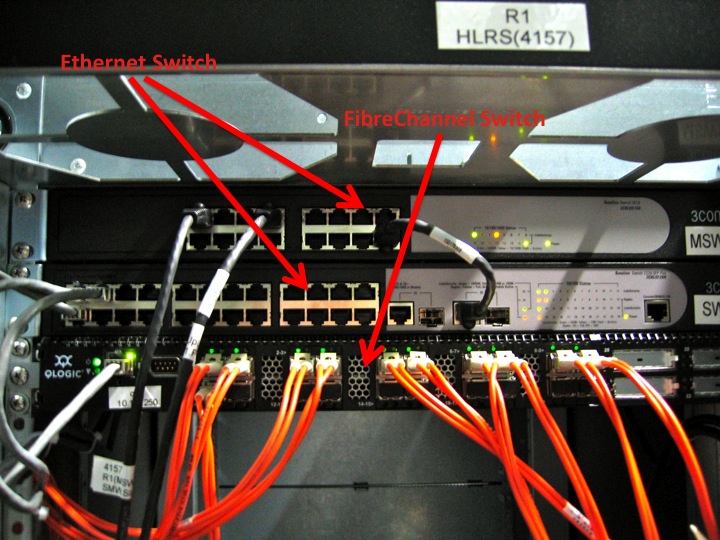

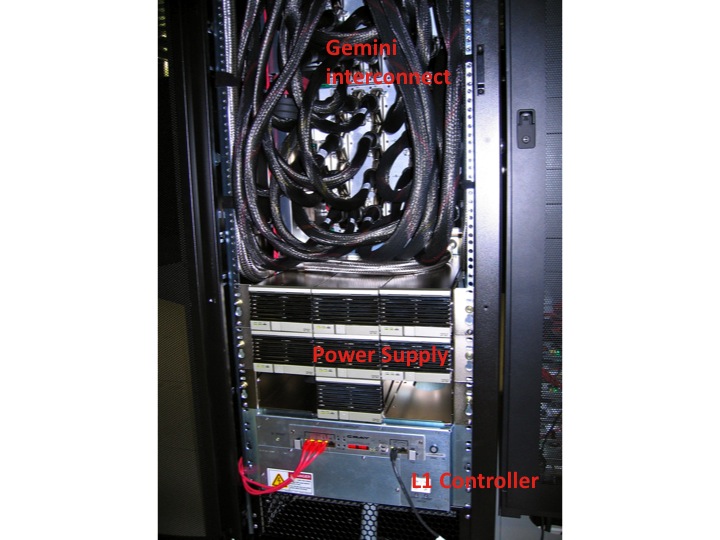

- High Speed Network CRAY Gemini

- LSI FibreChannel RAID System (SATA)

- Parallel Filesystem

  - Lustre version 1.8.2

  - capacity 11 TB

  - 3 OSTs, 1 MDS, Network Gemini
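Lustre distributes file data over its object storage targets (OSTs) in fixed-size stripes, round-robin. The sketch below illustrates which of a file's stripe objects holds a given byte offset. It assumes a 1 MiB stripe size and a file striped over all three OSTs; both values are assumptions for illustration, not the configured defaults of this installation, and the function name is hypothetical.

```python
MIB = 1 << 20
STRIPE_SIZE = 1 * MIB   # assumption: 1 MiB stripe size
STRIPE_COUNT = 3        # assumption: file striped over all three OSTs


def stripe_object_index(offset: int,
                        stripe_size: int = STRIPE_SIZE,
                        stripe_count: int = STRIPE_COUNT) -> int:
    """Return the 0-based stripe object that holds the byte at `offset`.

    This is the index within the file's own layout; which physical OST
    backs each stripe object is chosen by the filesystem.
    """
    if offset < 0:
        raise ValueError("offset must be non-negative")
    return (offset // stripe_size) % stripe_count


if __name__ == "__main__":
    for chunk in range(5):
        offset = chunk * MIB
        print(f"offset {chunk} MiB -> stripe object {stripe_object_index(offset)}")
```

With these assumed settings, consecutive 1 MiB chunks of a file land on stripe objects 0, 1, 2, 0, 1, and so on, which is what allows several OSTs to serve one large file in parallel.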

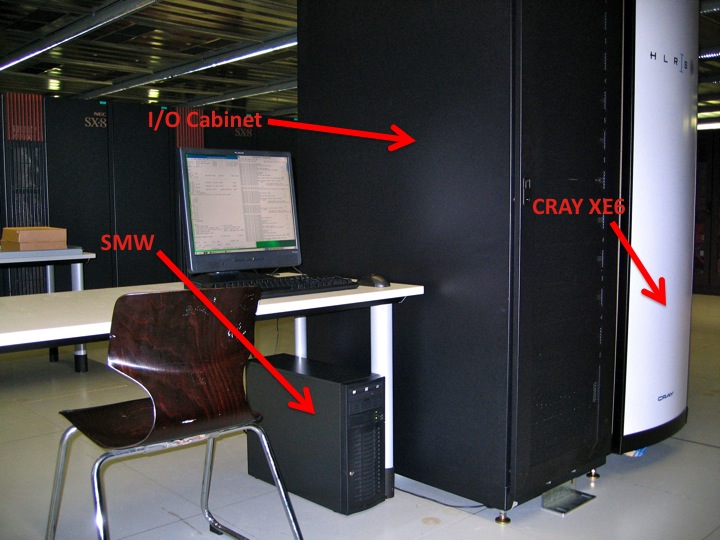

- System Management Workstation (SMW)

Architecture

- System Management Workstation (SMW)

  - the system administrator's console for managing a Cray system: monitoring, installing and upgrading software, controlling the hardware, and starting and stopping the XE6 system.

- service nodes are classified into:

  - login nodes (xe601.hww.de) for users to access the system

  - boot nodes, which provide the OS for all other nodes, licenses, ...

  - network nodes, which provide e.g. external network connections for the compute nodes

  - Cray Data Virtualization Service (DVS): an I/O forwarding service that can parallelize the I/O transactions of an underlying POSIX-compliant file system.

  - sdb node for services like ALPS, torque, moab, Cray management services, ...

  - I/O nodes for e.g. Lustre

  - MOM (torque) nodes for placing user jobs of the batch system into execution

- compute nodes

  - are only available to users through the batch system and the Application Level Placement Scheduler (ALPS), see running applications.
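Because compute nodes are reachable only through the batch system and ALPS, a job is typically submitted to torque and started with the ALPS launcher `aprun` from inside the job script. The sketch below renders such a script for a given node count. It assumes the `mppwidth` and `mppnppn` resource names used by torque on Cray systems of this generation, 16 cores per compute node, and a hypothetical executable `./my_app`; the resource names and limits actually accepted by this installation may differ.

```python
CORES_PER_NODE = 16  # from the hardware list: dual-socket nodes, 16 cores each


def render_job_script(nodes: int,
                      executable: str = "./my_app",   # hypothetical binary
                      walltime: str = "00:10:00") -> str:
    """Return the text of a batch script that starts one process per core."""
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    total_pes = nodes * CORES_PER_NODE
    lines = [
        "#!/bin/bash",
        f"#PBS -l mppwidth={total_pes}",        # total number of processes
        f"#PBS -l mppnppn={CORES_PER_NODE}",    # processes per node
        f"#PBS -l walltime={walltime}",
        "cd $PBS_O_WORKDIR",
        # aprun places the processes on the compute nodes via ALPS
        f"aprun -n {total_pes} -N {CORES_PER_NODE} {executable}",
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_job_script(nodes=2), end="")
```

The generated text would be saved to a file and handed to `qsub` on a login node; the script itself then runs on a MOM node, and only the `aprun` line reaches the compute nodes.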

Features

- Cray Linux Environment (CLE) 3.1 operating system

  - Operating System is based on SUSE Linux Enterprise Server (SLES) 11

- Cray Gemini interconnection network

- Cluster Compatibility Mode (CCM) functionality enables cluster-based independent software vendor (ISV) applications to run without modification on Cray systems.

- Batch System: torque, moab

- many development tools available:

  - Compiler: Cray, PGI, GNU

  - Debugging: DDT, ATP, ...

  - Performance Analysis: CrayPat, Cray Apprentice, PAPI

  - Libraries: BLAS, LAPACK, FFTW, PETSc, MPT, ...