- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

Graphic Environment

(→Linux) |

|||

| (23 intermediate revisions by 7 users not shown) | |||

| Line 1: | Line 1: | ||

For graphical pre- and post-processing purposes | For graphical pre- and post-processing purposes some visualisation nodes have been integrated into the Vulcan cluster. | ||

<pre> | <pre> | ||

user@ | user@cl5fr2>qsub -I -l select=1:node_type=visamd -q vis | ||

</pre> | </pre> | ||

| Line 8: | Line 8: | ||

== VNC Setup == | == VNC Setup == | ||

If you want to use the | If you want to use the vulcan Graphic Hardware e.g. for graphical pre- and post-processing, on way to do it is via a vnc-connection. For that purpose you have to download/install a VNC viewer to/on your local machine. | ||

E.g. TurboVNC comes as a pre-compiled package which can be downloaded from [ | E.g. TurboVNC comes as a pre-compiled package which can be downloaded from [https://sourceforge.net/projects/turbovnc/ https://sourceforge.net/projects/turbovnc/]. For Fedora Linux from version 13 onwards the package tigervnc is available via the package manager. | ||

Other VNC viewers can also be used but we recommend the usage of TurboVNC, an accelerated version of TightVNC | Other VNC viewers can also be used but we recommend the usage of TurboVNC, an accelerated version of TightVNC | ||

designed for video and 3D applications, since we run the TurboVNC server on the visualisation nodes and the combination of the two components will give the best performance for GL applications. | designed for video and 3D applications, since we run the TurboVNC server on the visualisation nodes and the combination of the two components will give the best performance for GL applications. | ||

==== Preparation of | ==== Preparation of vulcan Cluster ==== | ||

Before using vnc for the first time you have to log on to the | Before using vnc for the first time you have to log on to the Vulcan frontend and create the vnc settings directory | ||

<pre> | |||

cd $HOME | |||

mkdir .vnc | |||

</pre> | |||

With the command | |||

<pre>vncpasswd</pre> | <pre>vncpasswd</pre> | ||

the vnc password file vncpasswd is created within the $HOME/.vnc directory which is required for a correct server startup. | |||

==== Starting the TurboVNC server ==== | ==== Starting the TurboVNC server ==== | ||

| Line 25: | Line 30: | ||

<pre> | <pre> | ||

user@client>ssh | user@client>ssh cl1fr3.hww.hlrs.de | ||

</pre> | |||

Then load the VirtualGL module to setup the VNC environment and launch the VNC startscript. | |||

<pre> | |||

user@eslogin001>module load VirtualGL | |||

user@eslogin001>vis_via_vnc.sh | |||

</pre> | </pre> | ||

This will set up a default VNC session with one hour walltime running a TurboVNC server with a resolution of 1240x900. | |||

To control the configuration of the VNC session the vis_via_vnc.sh script has several '''optional''' parameters. The most relevant two are: | |||

<pre> | <pre> | ||

vis_via_vnc.sh [walltime] [geometry] | |||

</pre> | </pre> | ||

where walltime can be specified in format hh:mm:ss or only hh. | |||

The geometry option sets the resolution of the Xvnc server launched by the TurboVNC session and has to be given in format 1234x1234. | |||

There is also a -help option which returns the explanation of the parameters and gives examples for script usage. | |||

The vis_via_vnc.sh script returns the name of the node reserved for you, a display number and the IP-address. | The vis_via_vnc.sh script returns the name of the node reserved for you, a display number and the IP-address. | ||

| Line 41: | Line 58: | ||

like stated below | like stated below | ||

<pre> | <pre> | ||

user@client>vncviewer -via | user@client>vncviewer -via cl1fr3.hww.hlrs.de <node name>:<Display#> | ||

</pre> | </pre> | ||

| Line 53: | Line 70: | ||

Enter the vnc password you set on the frontend and you should get a Gnome session running in your VNC viewer. | Enter the vnc password you set on the frontend and you should get a Gnome session running in your VNC viewer. | ||

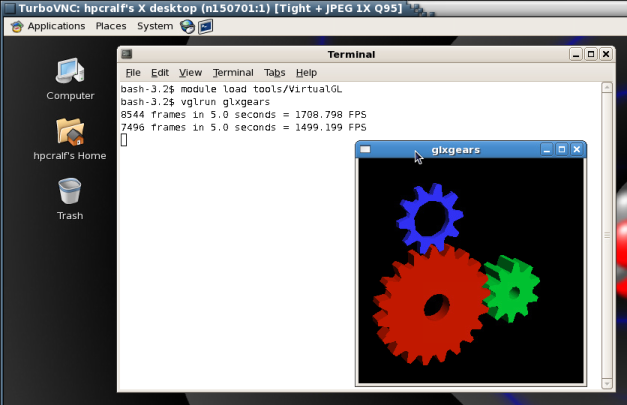

==== GL applications within the VNC session ==== | ==== GL applications within the VNC session with TurboVNC ==== | ||

To execute 64Bit GL Applications within this session you have to open a shell and again load the VirtualGL module to set the VirtualGL environment and then start the application with the VirtualGL wrapper command | To execute 64Bit GL Applications within this session you have to open a shell and again load the VirtualGL module to set the VirtualGL environment and then start the application with the VirtualGL wrapper command | ||

<pre> | <pre> | ||

| Line 60: | Line 77: | ||

[[Image:vgl_in_vnc_session.png]] | [[Image:vgl_in_vnc_session.png]] | ||

==== GL applications within the VNC session with X0-VNC ==== | |||

Since the X0-VNC server is directly reading the frame buffer of the visualisation node's graphic card, GL applications can be directly executed within the VNC session without using any wrapping command. | |||

==== Ending the vnc session ==== | ==== Ending the vnc session ==== | ||

| Line 66: | Line 86: | ||

<pre> | <pre> | ||

user@ | user@cl1fr3>qdel [Job ID] | ||

</pre> | |||

==== Usage under Windows ==== | |||

VNC viewers for Windows often do not support the '-via' option to tunnel via SSH. In this manual tunneling is required, which can be done using the PLink tool which comes with the [[http://www.chiark.greenend.org.uk/~sgtatham/putty/download.html|Putty] distribution. | |||

Open a command prompt window and run PLink with the options | |||

<pre> | |||

plink.exe -N -L 10000:<IP-address of vis-node>:5901 | |||

</pre> | </pre> | ||

The you can need to start your VNC client and connect to the address localhost:10000. | |||

== VirtualGL Setup (WITHOUT turbovnc) == | == VirtualGL Setup (WITHOUT turbovnc) == | ||

| Line 74: | Line 104: | ||

==== Linux ==== | ==== Linux ==== | ||

After the installation of VirtualGL you can connect to cl3fr1.hww.de via the vglconnect command | After the installation of VirtualGL you can connect to cl3fr1.hww.de via the vglconnect command. Please be aware that vglconnect uses the nettest binary on the remote side to determine a free port for the VGL connection. Since on the hww machines, VGL is installed in a non standard path one has either to use the -bindir option or set the environment variable VGL_BINDIR on the client side to the correct directory. I.e. to connect to the vulcan front-ends please use the following commands: | ||

<pre> | <pre> | ||

user@client>vglconnect -s | user@client>export VGL_BINDIR=/opt/hlrs/non-spack/2022-02/vis/VirtualGL/2.6.5/bin | ||

user@client>vglconnect -s cl1fr3.hww.hlrs.de | |||

</pre> | </pre> | ||

To prepare the visualisation queue job use the vis_via_vnc.sh script that is made available via the VirtualGL module. | |||

<pre> | <pre> | ||

user@cl3fr1>module load | user@cl3fr1>module load VirtualGL | ||

user@cl3fr1> | user@cl3fr1>vis_via_vnc.sh XX:XX:XX | ||

</pre> | </pre> | ||

where XX:XX:XX is the walltime required for your visualisation job. | where XX:XX:XX is the walltime required for your visualisation job. | ||

Once the job is running and the node is set up, the job-id as well as the node name are printed in front of the instructions on how to connect via vnc. To be able to connect to the reserved visualization node directly it is necessary to export the environment variable PBS_JOBID to the job-id of the corresponding queue job. I.e.: | |||

<pre> | |||

export PBS_JOBID=<job.no>.cl1intern | |||

user@cl3fr1>vglconnect -s n<node.no> | |||

</pre> | |||

On the visualisation node you can then execute 64Bit GL applications with the VirtualGL wrapper command | On the visualisation node you can then execute 64Bit GL applications with the VirtualGL wrapper command | ||

<pre> | <pre> | ||

user@vis-node>module load | user@vis-node>module load VirtualGL | ||

user@vis-node>vglrun <Application command> | user@vis-node>vglrun <Application command> | ||

</pre> | </pre> | ||

Latest revision as of 18:32, 13 August 2024

For graphical pre- and post-processing purposes some visualisation nodes have been integrated into the Vulcan cluster. An interactive job on one of these nodes can be requested from a frontend with:

user@cl5fr2>qsub -I -l select=1:node_type=visamd -q vis

Two ways of using the graphics hardware for remote rendering have been tested so far: the first is via TurboVNC, the second is directly via VirtualGL.

VNC Setup

If you want to use the Vulcan graphics hardware, e.g. for graphical pre- and post-processing, one way to do it is via a VNC connection. For that purpose you have to download and install a VNC viewer on your local machine.

E.g. TurboVNC comes as a pre-compiled package which can be downloaded from https://sourceforge.net/projects/turbovnc/. For Fedora Linux from version 13 onwards the package tigervnc is available via the package manager.

Other VNC viewers can also be used but we recommend the usage of TurboVNC, an accelerated version of TightVNC designed for video and 3D applications, since we run the TurboVNC server on the visualisation nodes and the combination of the two components will give the best performance for GL applications.

Preparation of the Vulcan cluster

Before using VNC for the first time, log on to the Vulcan frontend and create the VNC settings directory:

cd $HOME
mkdir .vnc

Then run

vncpasswd

to create the VNC password file within the $HOME/.vnc directory. This file is required for a correct server startup.
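The preparation step can be made safe to repeat with a small shell sketch. It assumes the settings directory is $HOME/.vnc as described above; the helper name prepare_vnc_dir and the restrictive permissions are illustrative choices, not part of the HLRS setup.

```shell
# Create the VNC settings directory if it is missing (hypothetical helper).
# The optional first argument overrides the base directory (default: $HOME).
prepare_vnc_dir() {
    base="${1:-$HOME}"
    # -p makes the call repeatable: no error if .vnc already exists
    mkdir -p "$base/.vnc" || return 1
    # Assumption: restrict access, since the directory will hold the password file
    chmod 700 "$base/.vnc"
    # Report the directory that is now in place
    printf '%s\n' "$base/.vnc"
}

# Usage on the frontend: prepare_vnc_dir && vncpasswd
```

Calling the helper a second time leaves an existing directory untouched, so it can be kept in a login script without harm.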

Starting the TurboVNC server

To start a TurboVNC server, first log in to the cluster frontend cl1fr3.hww.hlrs.de via ssh.

user@client>ssh cl1fr3.hww.hlrs.de

Then load the VirtualGL module to set up the VNC environment and launch the VNC start script.

user@eslogin001>module load VirtualGL
user@eslogin001>vis_via_vnc.sh

This will set up a default VNC session with one hour walltime running a TurboVNC server with a resolution of 1240x900. To control the configuration of the VNC session the vis_via_vnc.sh script has several optional parameters. The most relevant two are:

vis_via_vnc.sh [walltime] [geometry]

where walltime can be specified in format hh:mm:ss or only hh.

The geometry option sets the resolution of the Xvnc server launched by the TurboVNC session and has to be given in the format <width>x<height>, e.g. 1234x1234.

There is also a -help option which returns the explanation of the parameters and gives examples for script usage.

The vis_via_vnc.sh script returns the name of the node reserved for you, a display number and the IP-address.
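The two positional arguments described above can be checked locally before the script is called. The following is only a sketch: the helper names valid_walltime and valid_geometry are hypothetical, the restriction of minutes and seconds to 00-59 is an assumption, and whatever checks vis_via_vnc.sh performs itself are not documented here.

```shell
# Hypothetical pre-flight checks for the two vis_via_vnc.sh arguments.

# Accepts hours only (one or more digits) or hours:mm:ss,
# with mm and ss assumed to lie between 00 and 59.
valid_walltime() {
    printf '%s\n' "$1" | grep -Eq '^[0-9]+(:[0-5][0-9]:[0-5][0-9])?$'
}

# Accepts <width>x<height> with positive integers, e.g. 1240x900.
valid_geometry() {
    printf '%s\n' "$1" | grep -Eq '^[1-9][0-9]*x[1-9][0-9]*$'
}

walltime="02:00:00"
geometry="1920x1080"
if valid_walltime "$walltime" && valid_geometry "$geometry"; then
    # Print the call instead of running it, so the sketch works off-cluster
    echo "vis_via_vnc.sh $walltime $geometry"
else
    echo "invalid walltime or geometry" >&2
fi
```

On the frontend the echo line would be replaced by the actual script call.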

VNC viewer with -via option

If you have a VNC viewer that supports the -via option, such as TurboVNC or TigerVNC, you can simply call the viewer as shown below

user@client>vncviewer -via cl1fr3.hww.hlrs.de <node name>:<Display#>

VNC viewer without -via option

If you have a VNC viewer without support for the -via option, such as JavaVNC or TightVNC for Windows, you first have to set up an ssh tunnel via the frontend and then launch the VNC viewer with a connection to localhost

user@client>ssh -N -L10000:<IP-address of vis-node>:5901 cl1fr3.hww.hlrs.de &
user@client>vncviewer localhost:10000
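The remote port 5901 in the tunnel corresponds to display :1, since by VNC convention display N listens on TCP port 5900 + N. If vis_via_vnc.sh reports a different display number, the port has to be adjusted accordingly. A minimal sketch, in which the helper names vnc_port and tunnel_command are hypothetical and the address 10.0.0.42 is only a placeholder:

```shell
# Map a VNC display number to its TCP port (VNC convention: 5900 + display).
vnc_port() {
    echo $((5900 + $1))
}

# Print (not run) the tunnel command for a given node IP and display number.
# Local port 10000 and the frontend name are taken from the text above.
tunnel_command() {
    node_ip="$1"
    display="$2"
    echo "ssh -N -L 10000:${node_ip}:$(vnc_port "$display") cl1fr3.hww.hlrs.de"
}

# 10.0.0.42 is a placeholder address, not a real visualisation node
tunnel_command 10.0.0.42 1
```

The printed command can be inspected and then run by hand; the VNC viewer is afterwards pointed at localhost:10000 as described above.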

Enter the vnc password you set on the frontend and you should get a Gnome session running in your VNC viewer.

GL applications within the VNC session with TurboVNC

To run 64-bit GL applications within this session, open a shell, load the VirtualGL module again to set up the VirtualGL environment, and then start the application with the VirtualGL wrapper command

vglrun <Application command>

GL applications within the VNC session with X0-VNC

Since the X0-VNC server is directly reading the frame buffer of the visualisation node's graphic card, GL applications can be directly executed within the VNC session without using any wrapping command.

Ending the vnc session

If the VNC session is no longer needed and the requested walltime has not yet expired, you should kill your queue job with

user@cl1fr3>qdel [Job ID]

Usage under Windows

VNC viewers for Windows often do not support the '-via' option to tunnel via SSH. In this case manual tunneling is required, which can be done using the PLink tool that comes with the PuTTY distribution (http://www.chiark.greenend.org.uk/~sgtatham/putty/download.html).

Open a command prompt window and run PLink with the following options, tunneling through the cluster frontend:

plink.exe -N -L 10000:<IP-address of vis-node>:5901 cl1fr3.hww.hlrs.de

Then start your VNC client and connect to the address localhost:10000.

VirtualGL Setup (WITHOUT turbovnc)

To use VirtualGL you have to install it on your local client. It is available in the form of pre-compiled packages at http://www.virtualgl.org/Downloads/VirtualGL.

Linux

After the installation of VirtualGL you can connect to cl1fr3.hww.hlrs.de via the vglconnect command. Please be aware that vglconnect uses the nettest binary on the remote side to determine a free port for the VGL connection. Since VGL is installed in a non-standard path on the hww machines, you have to either use the -bindir option or set the environment variable VGL_BINDIR on the client side to the correct directory. To connect to the Vulcan front-ends, please use the following commands:

user@client>export VGL_BINDIR=/opt/hlrs/non-spack/2022-02/vis/VirtualGL/2.6.5/bin
user@client>vglconnect -s cl1fr3.hww.hlrs.de
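The -bindir alternative mentioned above passes the same directory on the command line instead of through the environment. The sketch below only prints the resulting call; the helper name vgl_connect_cmd and the variable VGL_REMOTE_BIN are hypothetical, and the path is the one given in the text.

```shell
# Path of the VirtualGL binaries on the cluster, as given in the text above.
VGL_REMOTE_BIN=/opt/hlrs/non-spack/2022-02/vis/VirtualGL/2.6.5/bin

# Print (not run) a vglconnect call that passes the path via -bindir
# instead of exporting VGL_BINDIR. vgl_connect_cmd is a hypothetical helper.
vgl_connect_cmd() {
    echo "vglconnect -bindir $VGL_REMOTE_BIN -s $1"
}

vgl_connect_cmd cl1fr3.hww.hlrs.de
```

Exporting VGL_BINDIR once per shell session is usually more convenient when several connections are opened; -bindir keeps the setting local to a single call.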

To prepare the visualisation queue job use the vis_via_vnc.sh script that is made available via the VirtualGL module.

user@cl3fr1>module load VirtualGL
user@cl3fr1>vis_via_vnc.sh XX:XX:XX

where XX:XX:XX is the walltime required for your visualisation job.

Once the job is running and the node is set up, the job ID and the node name are printed ahead of the instructions on how to connect via VNC. To connect to the reserved visualisation node directly, the environment variable PBS_JOBID has to be set to the job ID of the corresponding queue job, for example:

export PBS_JOBID=<job.no>.cl1intern
user@cl3fr1>vglconnect -s n<node.no>

On the visualisation node you can then execute 64-bit GL applications with the VirtualGL wrapper command

user@vis-node>module load VirtualGL
user@vis-node>vglrun <Application command>