- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

CRAY XC40 Graphic Environment: Difference between revisions

No edit summary |

|||

| Line 15: | Line 15: | ||

If you want to use the CRAY XE6 Graphic Hardware e.g. for graphical pre- and post-processing, on way to do it is via a vnc-connection. For that purpose you have to download/install a VNC viewer to/on your local machine. | If you want to use the CRAY XE6 Graphic Hardware e.g. for graphical pre- and post-processing, on way to do it is via a vnc-connection. For that purpose you have to download/install a VNC viewer to/on your local machine. | ||

E.g. TurboVNC comes as a pre-compiled package which can be downloaded from [http://www.virtualgl.org/Downloads/TurboVNC http://www.virtualgl.org/Downloads/TurboVNC]. For Fedora | E.g. TurboVNC comes as a pre-compiled package which can be downloaded from [http://www.virtualgl.org/Downloads/TurboVNC http://www.virtualgl.org/Downloads/TurboVNC]. For Fedora 20 the package tigervnc is available via yum installer. | ||

Other VNC viewers can also be used but we recommend the usage of TurboVNC, an accelerated version of TightVNC | Other VNC viewers can also be used but we recommend the usage of TurboVNC, an accelerated version of TightVNC | ||

| Line 32: | Line 32: | ||

<pre> | <pre> | ||

user@client>ssh | user@client>ssh hornet.hww.de | ||

</pre> | </pre> | ||

Then load the VirtualGL module to setup the VNC environment and launch the VNC startscript. | Then load the VirtualGL module to setup the VNC environment and launch the VNC startscript. | ||

<pre> | <pre> | ||

user@ | user@eslogin00X>module load tools/VirtualGL | ||

user@ | user@eslogin00X>vis_via_vnc.sh | ||

</pre> | </pre> | ||

This will set up a default VNC session with one hour walltime running a TurboVNC server with a resolution of 1240x900. | This will set up a default VNC session with one hour walltime running a TurboVNC server with a resolution of 1240x900. | ||

To control the configuration of the VNC session the vis_via_vnc.sh script has | To control the configuration of the VNC session the vis_via_vnc.sh script has '''optional''' parameters. | ||

<pre> | <pre> | ||

vis_via_vnc.sh [walltime] [geometry] [x0vnc] | vis_via_vnc.sh [walltime] [geometry] [x0vnc] [-msmp] [memory] [-s] | ||

</pre> | </pre> | ||

where walltime can be specified in format hh:mm:ss or only hh. | where walltime can be specified in format hh:mm:ss or only hh. | ||

The geometry option sets the resolution of the Xvnc server launched by the TurboVNC session and has to be given in format | The geometry option sets the resolution of the Xvnc server launched by the TurboVNC session and has to be given in format widhtxheight. | ||

If x0vnc is specified instead of a TurboVNC server with own Xvnc Display a X0-VNC server is started. As its name says this server is directly connected to the :0.0 Display which is contoled by an X-Server always running on the visualisation nodes. A session started with this option will have a | If x0vnc is specified instead of a TurboVNC server with own Xvnc Display a X0-VNC server is started. As its name says this server is directly connected to the :0.0 Display which is contoled by an X-Server always running on the visualisation nodes. A session started with this option will have a fixed resolution which can not be changed. | ||

There is also a -help option which returns the explanation of the parameters and gives examples for script usage. | There is also a --help option which returns the explanation of the parameters and gives examples for script usage. | ||

The vis_via_vnc.sh script returns the name of the node reserved for you, a display number and the IP-address. | The vis_via_vnc.sh script returns the name of the node reserved for you, a display number and the IP-address. | ||

| Line 62: | Line 62: | ||

like stated below | like stated below | ||

<pre> | <pre> | ||

user@client>vncviewer -via | user@client>vncviewer -via hornet.hww.de <node name>:<Display#> | ||

</pre> | </pre> | ||

| Line 75: | Line 75: | ||

== GL applications within the VNC session with TurboVNC == | == GL applications within the VNC session with TurboVNC == | ||

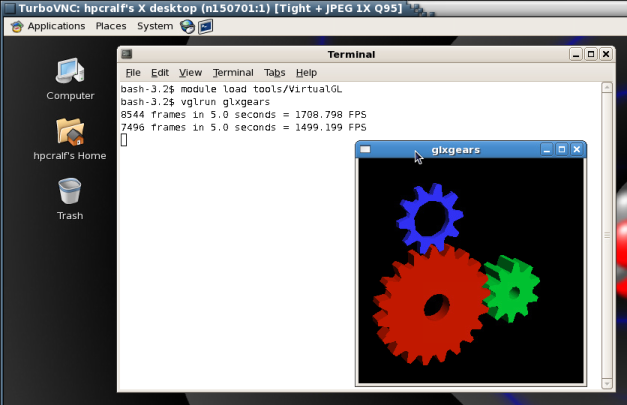

To execute 64Bit GL Applications within | To execute 64Bit GL Applications within the VNC-session you have to open a shell and again load the VirtualGL module to set the VirtualGL environment and then start the application with the VirtualGL wrapper command | ||

<pre> | <pre> | ||

vglrun <Application command> | user@vis-node> module load tools/VirtualGL | ||

user@vis-node> vglrun <Application command> | |||

</pre> | </pre> | ||

Revision as of 12:08, 29 October 2014

For graphical pre- and post-processing purposes, 3 visualisation nodes have been integrated into the external nodes of Hornet. These nodes are equipped with 512 GB of main memory. A single node can be accessed by using the node feature mem512gb:

user@eslogin00X> qsub -I -lnodes=1:mem512gb

Two additional multi-user SMP nodes are equipped with 1.5 TB of memory. These nodes are accessed with the node feature smp and the smp queue. Access is scheduled by the amount of required memory, which has to be specified with the vmem resource:

user@eslogin00X> qsub -I -lnodes=1:smp:ppn=1 -q smp -lvmem=5gb
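Because the smp queue schedules by memory, a malformed vmem value is an easy mistake. The sketch below is a hypothetical dry-run helper (smp_qsub_cmd is not part of the HLRS tools) that only prints the qsub command for a given memory request after a basic format check. It assumes vmem is an integer followed by a two-letter unit such as gb, as in the 5gb example above.

```shell
# Hypothetical dry-run helper: print the interactive qsub command for the
# multi-user smp nodes. Assumes vmem is an integer followed by kb, mb, gb or tb.
smp_qsub_cmd() {
  case "$1" in
    *kb|*mb|*gb|*tb) ;;
    *) echo "vmem needs a unit such as gb: $1" >&2; return 1 ;;
  esac
  _amount=${1%??}    # strip the two-letter unit, e.g. "5gb" -> "5"
  case "$_amount" in
    ''|*[!0-9]*) echo "invalid vmem amount: $1" >&2; return 1 ;;
  esac
  printf 'qsub -I -lnodes=1:smp:ppn=1 -q smp -lvmem=%s\n' "$1"
}
```

For example, smp_qsub_cmd 5gb prints the command shown above. Nothing is submitted, so you can check the line before running it on an eslogin node.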

Two ways of using the graphics hardware for remote rendering have been tested so far: via TurboVNC, and directly via VirtualGL.

VNC Setup

If you want to use the Cray XC40 graphics hardware, e.g. for graphical pre- and post-processing, one way to do so is via a VNC connection. For that purpose you have to install a VNC viewer on your local machine.

For example, TurboVNC comes as a pre-compiled package that can be downloaded from http://www.virtualgl.org/Downloads/TurboVNC. For Fedora 20, the tigervnc package is available via the yum installer.

Other VNC viewers can also be used, but we recommend TurboVNC, an accelerated version of TightVNC designed for video and 3D applications. The visualisation nodes run the TurboVNC server, and combining the two components gives the best performance for GL applications.

Preparation of Hornet

Before using VNC for the first time, log on to one of the Hornet front ends (hornet.hww.de) and load the VirtualGL module:

module load tools/VirtualGL

Then create a .vnc directory in your home directory and run

vncpasswd

which creates a passwd file in the .vnc directory.
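The one-time preparation can be scripted. This is a minimal sketch that assumes a POSIX shell on the front end. prepare_vnc_dir and vnc_password_set are hypothetical helper names, and restricting ~/.vnc to mode 700 is our own precaution rather than an HLRS requirement.

```shell
# Hypothetical helpers for the one-time VNC preparation on the front end.

# Create ~/.vnc if needed and make it accessible to the owner only.
prepare_vnc_dir() {
  mkdir -p "$HOME/.vnc" || return 1
  chmod 700 "$HOME/.vnc"
}

# Succeed if vncpasswd has already written a non-empty password file.
vnc_password_set() {
  [ -s "$HOME/.vnc/passwd" ]
}

# Typical use on the front end:
#   prepare_vnc_dir
#   module load tools/VirtualGL
#   vnc_password_set || vncpasswd
```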

Starting the VNC server

To start a VNC session, log in to one of the front ends (hornet.hww.de) via ssh:

user@client>ssh hornet.hww.de

Then load the VirtualGL module to set up the VNC environment and launch the VNC start script.

user@eslogin00X>module load tools/VirtualGL
user@eslogin00X>vis_via_vnc.sh

This will set up a default VNC session with one hour walltime running a TurboVNC server with a resolution of 1240x900. To control the configuration of the VNC session the vis_via_vnc.sh script has optional parameters.

vis_via_vnc.sh [walltime] [geometry] [x0vnc] [-msmp] [memory] [-s]

where walltime can be given in the format hh:mm:ss or as hours only (hh).

The geometry option sets the resolution of the Xvnc server launched by the TurboVNC session and has to be given in the format widthxheight.

If x0vnc is specified, an X0-VNC server is started instead of a TurboVNC server with its own Xvnc display. As its name suggests, this server is connected directly to display :0.0, which is controlled by an X server that always runs on the visualisation nodes. A session started with this option has a fixed resolution that cannot be changed.

The --help option prints an explanation of the parameters together with usage examples.

The vis_via_vnc.sh script returns the name of the node reserved for you, a display number and the IP address.
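Because vis_via_vnc.sh takes positional arguments, it can help to check walltime and geometry before submitting. The sketch below encodes the formats described above (hh:mm:ss or hh, and widthxheight). The function names are hypothetical. The assumption that hours have at most two digits is ours, not a documented limit of the script.

```shell
# Hypothetical pre-checks for the vis_via_vnc.sh arguments.

# walltime: "hh:mm:ss" or hours only ("h" or "hh").
valid_walltime() {
  case "$1" in
    [0-9]|[0-9][0-9]) return 0 ;;
    [0-9][0-9]:[0-5][0-9]:[0-5][0-9]) return 0 ;;
    *) return 1 ;;
  esac
}

# geometry: "<width>x<height>", both parts non-empty and numeric.
valid_geometry() {
  case "$1" in
    *[!0-9x]*|x*|*x|*x*x*) return 1 ;;  # stray characters, missing part, or two x
    *x*) return 0 ;;
    *) return 1 ;;
  esac
}

# Example:
#   valid_walltime 02:00:00 && valid_geometry 1910x1010 &&
#     vis_via_vnc.sh 02:00:00 1910x1010
```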

VNC viewer with -via option

If your VNC viewer supports the -via option, as TurboVNC and TigerVNC do, you can simply call it as follows:

user@client>vncviewer -via hornet.hww.de <node name>:<Display#>

VNC viewer without -via option

If your VNC viewer does not support the -via option, as is the case for JavaVNC or TightVNC for Windows, first set up an SSH tunnel via the front end and then launch the VNC viewer with a connection to localhost:

user@client>ssh -N -L10000:<IP-address of vis-node>:5901 hornet.hww.de &
user@client>vncviewer localhost:10000

Enter the VNC password you set on the front end. You should then see a GNOME session running in your VNC viewer.
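The port 5901 used above follows the usual VNC convention of TCP port 5900 plus the display number, so it matches display :1. If vis_via_vnc.sh reports a different display number, the tunnel target port changes accordingly. The following minimal sketch shows that arithmetic. vnc_port and tunnel_cmd are hypothetical helpers, and 10000 is simply the free local port chosen in the example above.

```shell
# Hypothetical helpers: derive the VNC port from the display number and
# print the matching SSH tunnel command (dry run, nothing is executed).

# VNC convention: display :N listens on TCP port 5900 + N.
vnc_port() {
  case "$1" in
    ''|*[!0-9]*) echo "display must be a number: $1" >&2; return 1 ;;
  esac
  echo $((5900 + $1))
}

# Arguments: local port, IP address of the vis node, display number.
tunnel_cmd() {
  _port=$(vnc_port "$3") || return 1
  printf 'ssh -N -L%s:%s:%s hornet.hww.de\n' "$1" "$2" "$_port"
}
```

For example, tunnel_cmd 10000 <IP-address of vis-node> 1 prints the tunnel command for display :1. Append & when running it, then connect with vncviewer localhost:10000.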

GL applications within the VNC session with TurboVNC

To run 64-bit GL applications within the VNC session, open a shell, load the VirtualGL module again to set up the VirtualGL environment, and start the application with the VirtualGL wrapper command vglrun:

user@vis-node> module load tools/VirtualGL
user@vis-node> vglrun <Application command>

GL applications within the VNC session with X0-VNC

Since the X0-VNC server reads the frame buffer of the visualisation node's graphics card directly, GL applications can be run within the VNC session without any wrapper command.

Ending the VNC session

If you no longer need the VNC session and the requested walltime has not yet expired, delete your queue job with

user@eslogin00X>qdel <your_job_id>

VirtualGL Setup (without TurboVNC)

To use VirtualGL you have to install it on your local client. It is available in the form of pre-compiled packages at http://www.virtualgl.org/Downloads/VirtualGL.

Linux

After the installation of VirtualGL you can connect to Hornet via the vglconnect command

user@client>vglconnect -s hornet.hww.de

Then prepare the visualisation queue job and connect to the reserved visualisation node with the vglconnect command:

user@eslogin001>module load tools/VirtualGL
user@eslogin001>vis_via_vgl.sh XX:XX:XX
user@eslogin001>vglconnect -s vis-node

where XX:XX:XX is the walltime required for your visualisation job and vis-node the hostname of the visualisation node reserved for you.

On the visualisation node you can then run 64-bit GL applications with the VirtualGL wrapper command:

user@vis-node>module load tools/VirtualGL
user@vis-node>vglrun <Application command>

Visualisation Software

To use the installed visualisation software please follow the steps described below.

Covise

ParaView

Building ParaView

To build ParaView on HWW machines, first download the source package of the desired version from http://www.paraview.org/download and extract it in your home directory.

Next, create an empty build directory outside the source directory, since an out-of-source build is the recommended way to compile ParaView.

Building ParaView requires recent versions of several dependencies. For an OpenGL build these are:

- Autotools

- Cmake

- Qt 4

If you plan to build a ParaView server suitable for the Hornet compute nodes, recent versions of

- LLVM with Clang

- libclc and

- Mesa

also have to be available.

All of these dependencies are installed on Hornet. Currently the following versions are available:

- m4-1.4.17

- autoconf-2.69

- automake-1.14

- libtool-2.4.2

- pkg-config-0.28

- llvm-3.4.2

- libclc-0.1.5

- Mesa-10.2.2

- Qt-4.8.6

- Cmake-3.0.2

Environment setup

To set up your environment so that the dependencies of the OpenGL client can be used, source the following bash script.

setenv-gl.sh

#!/bin/bash
#
# Setup modules #######################################
module load tools/autotools
module load tools/cmake
module unload PrgEnv-cray
module load PrgEnv-gnu
module swap gcc/4.9.1 gcc/4.8.1
#
# QT #################################################
export LD_LIBRARY_PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/qt/lib:$LD_LIBRARY_PATH
export PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/qt/bin:$PATH
#

To set up your environment so that the dependencies of the Mesa server can be used, source the following bash script.

setenv-mesa.sh

#!/bin/bash
#
# Setup modules #######################################
module load tools/autotools
module load tools/cmake
module unload PrgEnv-cray
module load PrgEnv-gnu
module swap gcc/4.9.1 gcc/4.8.1
#
# LLVM ###############################################
export LD_LIBRARY_PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/llvm/lib:$LD_LIBRARY_PATH
export PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/llvm/bin:$PATH
#
# MESA ###############################################
export LD_LIBRARY_PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/lib:$LD_LIBRARY_PATH
#
# QT #################################################
export LD_LIBRARY_PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/qt/lib:$LD_LIBRARY_PATH
export PATH=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/qt/bin:$PATH
#

That is, copying one of the scripts above into a file, e.g. setenv-pv.sh, and executing

source setenv-pv.sh

will make your environment ready to build ParaView.
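The scripts above prepend directories with plain export statements. Sourcing one of them twice therefore adds duplicate entries, and an initially empty variable ends up with a trailing colon, which for PATH means the current directory. A hedged alternative is a small helper such as the hypothetical prepend_path below. It is written for any POSIX shell and is not part of the HLRS setup scripts.

```shell
# Hypothetical helper: prepend a directory to a colon-separated variable
# (e.g. PATH, LD_LIBRARY_PATH) unless it is already present, then export it.
prepend_path() {
  _pp_var=$1 _pp_dir=$2
  eval "_pp_cur=\${$_pp_var:-}"
  case ":$_pp_cur:" in
    *":$_pp_dir:"*) ;;                      # already present: leave as is
    *) if [ -n "$_pp_cur" ]; then
         _pp_cur="$_pp_dir:$_pp_cur"
       else
         _pp_cur="$_pp_dir"                # no trailing colon on empty input
       fi ;;
  esac
  eval "$_pp_var=\$_pp_cur"
  export "$_pp_var"
}

# Example, equivalent to the QT lines of setenv-gl.sh:
#   prepend_path LD_LIBRARY_PATH /sw/hornet/hlrs/tools/paraview/4.1.0/aux/qt/lib
#   prepend_path PATH /sw/hornet/hlrs/tools/paraview/4.1.0/aux/qt/bin
```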

Source code patches

If you want to use your installation to build custom plugins, you have to patch one of VTK's source files to enable dynamic loading of shared libraries at runtime. The file to patch is located in the VTK part of the ParaView source tree:

ParaView-vx.x.x/VTK/Utilities/KWSys/vtksys/DynamicLoader.cxx

The patch essentially disables the #ifdef section for systems without shared-library support. In ParaView 4.1.0 this can be done by commenting out lines 380-422, as shown below.

374 return retval;
375 }
376
377 } // namespace KWSYS_NAMESPACE
378 #endif
379
380 // // ---------------------------------------------------------------
381 // // 5. Implementation for systems without dynamic libs
382 // // __gnu_blrts__ is IBM BlueGene/L
383 // // __LIBCATAMOUNT__ is defined on Catamount on Cray compute nodes
384 // #if defined(__gnu_blrts__) || defined(__LIBCATAMOUNT__) || defined(__CRAYXT_COMPUTE_LINUX_TARGET)
385 // #include <string.h> // for strerror()
386 // #define DYNAMICLOADER_DEFINED 1
387
388 // namespace KWSYS_NAMESPACE
389 // {
390
391 // //----------------------------------------------------------------------------
392 // DynamicLoader::LibraryHandle DynamicLoader::OpenLibrary(const char* libname )
393 // {
394 // return 0;
395 // }
396
397 // //----------------------------------------------------------------------------
398 // int DynamicLoader::CloseLibrary(DynamicLoader::LibraryHandle lib)
399 // {
400 // if (!lib)
401 // {
402 // return 0;
403 // }
404
405 // return 1;
406 // }
407
408 // //----------------------------------------------------------------------------
409 // DynamicLoader::SymbolPointer DynamicLoader::GetSymbolAddress(
410 // DynamicLoader::LibraryHandle lib, const char* sym)
411 // {
412 // return 0;
413 // }
414
415 // //----------------------------------------------------------------------------
416 // const char* DynamicLoader::LastError()
417 // {
418 // return "General error";
419 // }
420
421 // } // namespace KWSYS_NAMESPACE
422 // #endif
423
424 #ifdef __MINT__
425 #define DYNAMICLOADER_DEFINED 1
426 #define _GNU_SOURCE /* for program_invocation_name */
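Instead of editing the file by hand, you can comment out the lines with sed. This sketch assumes that lines 380 to 422 of your file correspond exactly to the 4.1.0 listing above. Check the range first, e.g. with sed -n '378,424p' on the file. comment_out_lines is a hypothetical helper. It keeps the unmodified file with the suffix .orig and also prefixes blank lines in the range, which is harmless.

```shell
# Hypothetical helper: prefix lines FIRST..LAST of FILE with "// ",
# keeping the unmodified file as FILE.orig.
comment_out_lines() {
  _file=$1 _first=$2 _last=$3
  cp "$_file" "$_file.orig" || return 1
  sed "${_first},${_last}s|^|// |" "$_file.orig" > "$_file"
}

# Example (ParaView-vx.x.x is the extracted source directory):
#   comment_out_lines ParaView-vx.x.x/VTK/Utilities/KWSys/vtksys/DynamicLoader.cxx 380 422
```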

Configuration

To configure and then build ParaView, change to your empty build directory and run ccmake:

cd <your_build_directory>
ccmake -Wno-dev <paraview_source_directory>

Press c for the initial configuration. When cmake has finished the initial configuration, change the build parameters according to your needs. For the installation, the following values were used:

BUILD_DOCUMENTATION : OFF
BUILD_EXAMPLES : OFF
BUILD_SHARED_LIBS : ON
BUILD_TESTING : OFF
CMAKE_BUILD_TYPE : Release
PARAVIEW_ENABLE_PYTHON : ON
PARAVIEW_INSTALL_DEVELOPMENT_F : ON
PARAVIEW_USE_MPI : ON

Depending on whether the OpenGL client or the Mesa server is to be built, the prefix is:

CMAKE_INSTALL_PREFIX : /opt/hlrs/tools/paraview/4.1.0/OpenGL

or

CMAKE_INSTALL_PREFIX : /opt/hlrs/tools/paraview/4.1.0/Mesa

Press c to configure. When the configuration has finished, you should encounter the following error:

CMake Error at VTK/CMake/vtkTestingMPISupport.cmake:31 (message):
  MPIEXEC was empty.
Call Stack (most recent call first):
  VTK/Parallel/MPI/CMakeLists.txt:4 (include)

Press e to exit the error display, press t to toggle the advanced options, and change the following build parameters:

MPIEXEC : aprun
MPIEXEC_MAX_NUMPROCS : 24
MPI_Fortran_COMPILER : /opt/cray/craype/2.2.0/bin/ftn
Module_vtkFiltersParallelGeome : ON
Module_vtkFiltersSelection : ON
Module_vtkIOMPIParallel : ON
Module_vtkImagingMath : ON
Module_vtkImagingStatistics : ON
Module_vtkImagingStencil : ON
VTK_Group_Imaging : ON
VTK_Group_MPI : ON
CMAKE_SHARED_LINKER_FLAGS : -z muldefs

After changing these parameters, press c twice. After the second configuration run, a new option [g] to generate appears.

Press g to generate the makefiles and exit ccmake.
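You can also pass the settings entered in ccmake to a non-interactive cmake run as -D options, which makes the configuration easier to repeat. The hypothetical helper below turns "KEY : VALUE" lines, as listed above, into one -DKEY=VALUE argument per line. Note that ccmake appears to truncate long variable names in its display (e.g. PARAVIEW_INSTALL_DEVELOPMENT_F and Module_vtkFiltersParallelGeome above). Look up the full names in CMakeCache.txt in the build directory before using them on the command line.

```shell
# Hypothetical helper: convert "KEY : VALUE" lines (as shown by ccmake)
# into "-DKEY=VALUE" lines. Lines that do not match are skipped.
ccmake_pairs_to_defs() {
  sed -n 's/^[[:space:]]*\([A-Za-z_][A-Za-z0-9_]*\)[[:space:]]*:[[:space:]]*\(.*[^[:space:]]\)[[:space:]]*$/-D\1=\2/p'
}

# Example (bash; settings.txt is a hypothetical file holding the pairs):
#   defs=()
#   while IFS= read -r d; do defs+=("$d"); done < <(ccmake_pairs_to_defs < settings.txt)
#   cmake "${defs[@]}" <paraview_source_directory>
```

Reading each line into its own array element keeps values with spaces, such as -z muldefs, together as a single argument.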

If you want to build the Mesa server, an additional non-interactive configuration has to be run with the following command.

Skip this step if you are building the OpenGL client.

cmake \
<paraview_source_directory> \
-DPARAVIEW_BUILD_QT_GUI=OFF \
-DVTK_USE_X=OFF \
-DOPENGL_INCLUDE_DIR=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/include \
-DOPENGL_gl_LIBRARY=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/lib/libOSMesa.so \
-DOPENGL_glu_LIBRARY=/usr/lib/libGLU.so \
-DVTK_OPENGL_HAS_OSMESA=ON \
-DOSMESA_INCLUDE_DIR=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/include \
-DOSMESA_LIBRARY=/sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/lib/libOSMesa.so
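The Mesa configuration depends on several absolute paths. A quick sanity check before running cmake can save a failed configure. The sketch below (check_files is a hypothetical name) only tests that each listed path exists and reports the missing ones.

```shell
# Hypothetical helper: report every path that does not exist;
# returns non-zero if at least one is missing.
check_files() {
  _missing=0
  for _f in "$@"; do
    if [ ! -e "$_f" ]; then
      echo "missing: $_f" >&2
      _missing=1
    fi
  done
  return "$_missing"
}

# Example before the Mesa configuration:
#   check_files /sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/include \
#               /sw/hornet/hlrs/tools/paraview/4.1.0/aux/mesa/lib/libOSMesa.so \
#               /usr/lib/libGLU.so
```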

Build

To build, log in to a mom node, e.g.

ssh mom12

Then compile and install with

make
make install

Using Paraview

Serial client on pre-/postprocessing nodes

Allocate a pre-/postprocessing node with the vis_via_vnc.sh script provided by the tools/VirtualGL module. On an eslogin node, execute:

module load tools/VirtualGL
vis_via_vnc.sh [walltime] [geometry]

Connect to the VNC session using one of the methods reported by the script. Within the VNC session, open a terminal and execute:

module load tools/VirtualGL
module load tools/paraview/4.1.0-Pre
vglrun paraview

Launch parallel server on compute nodes

In order to launch a parallel pvserver on the Hornet compute nodes, request the number of compute nodes you need:

qsub -I -lnodes=XX:ppn=YY -lwalltime=hh:mm:ss -N PVServer

On the mom node, run:

module load tools/paraview/4.1.0-parallel-Mesa
aprun -n XX*YY -N YY pvserver
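In the aprun line, XX*YY stands for the total number of processes, i.e. the number of nodes times the processes per node, and has to be replaced by the computed value. The following minimal sketch shows the arithmetic. pvserver_cmd is a hypothetical dry-run helper that prints the command for given values.

```shell
# Hypothetical dry-run helper: print the aprun line for NODES nodes with
# PPN processes per node (total processes = NODES * PPN).
pvserver_cmd() {
  for _n in "$1" "$2"; do
    case "$_n" in
      ''|*[!0-9]*) echo "usage: pvserver_cmd NODES PPN" >&2; return 1 ;;
    esac
  done
  printf 'aprun -n %d -N %d pvserver\n' "$(( $1 * $2 ))" "$2"
}
```

After qsub -I -lnodes=4:ppn=24 ..., for instance, pvserver_cmd 4 24 prints the aprun line with 96 processes. Typing aprun -n $((4*24)) -N 24 pvserver directly is equivalent.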

Once the server is running it should respond with something like:

Waiting for client...
Connection URL: cs://nidxxxxx:portno
Accepting connection(s): nidxxxxx:portno

This shows that the server is ready for an interactive client to connect. Here nidxxxxx is the name of the head node the pvserver is running on, and portno is the port on which the pvserver on the head node listens for a client to perform the connection handshake.
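If the pvserver output is captured, e.g. in a log file, you can extract the address needed later for pvconnect from the "Accepting connection(s):" line. This sketch uses a hypothetical helper and assumes the output format shown above. The log file name in the usage comment is also only an example.

```shell
# Hypothetical helper: read pvserver output on stdin and print the
# host:port that follows "Accepting connection(s):".
pv_accept_addr() {
  sed -n 's/.*Accepting connection(s):[[:space:]]*\([^[:space:]]*\).*/\1/p'
}

# Example on the mom node (pvserver.log is a hypothetical file name):
#   aprun -n XX*YY -N YY pvserver 2>&1 | tee pvserver.log
#   pv_accept_addr < pvserver.log    # prints the nidxxxxx:portno string
```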

Connect to parallel server from multi user smp nodes

To connect interactively to a parallel pvserver running on the compute nodes, first allocate a VNC session on one of the multi-user SMP nodes using the -msmp option of the vis_via_vnc.sh script:

module load tools/VirtualGL
vis_via_vnc.sh 01:00:00 1910x1010 -msmp

Connect to the VNC session using one of the methods reported by the script. Within the VNC session, open a terminal and execute:

module load tools/paraview/4.1.0-Pre
pvconnect -pvs nidxxxxx:portno -via networkX

Here nidxxxxx:portno is the string printed by the pvserver after "Accepting connection(s):", and networkX is the name of the mom node to which your qsub for the pvserver was dispatched. For academic users this is one of network1 to network6. The pvconnect command launches a ParaView client that is already connected to the specified pvserver.
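The two values can be combined into the pvconnect call. The hypothetical helper below prints the command for a given pvserver address and mom node. It warns, without failing, if the node name is not one of network1 to network6, since that naming applies to academic users only. It does not execute anything.

```shell
# Hypothetical dry-run helper: print the pvconnect call for a pvserver
# address (nidxxxxx:portno) and the mom node the pvserver job runs on.
pvconnect_cmd() {
  case "$2" in
    network[1-6]) ;;
    *) echo "note: $2 is not one of network1..network6" >&2 ;;
  esac
  printf 'pvconnect -pvs %s -via %s\n' "$1" "$2"
}
```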