- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

CRAY XE6 Hardware and Architecture

From HLRS Platforms


m (added R134a) |

|||

| (16 intermediate revisions by 3 users not shown) | |||

| Line 1: | Line 1: | ||

== Hardware of Installation step 1 == | == Hardware of Installation step 1 == | ||

=== Summary === | === Summary Phase 1 Step 1 === | ||

* 3552 [ | * Cray [http://www.cray.com/Products/Computing/XE/XE6.aspx XE6] supercomputer named [http://en.wikipedia.org/wiki/Osmoderma_eremita Hermit] | ||

** | * Peak performance for the whole system: about 1 PFLOP/s (3552*294.4=1045708.8 GFLOP/s) | ||

* total compute node RAM: 126 TB (3072*32+480*64=129024 GB) | |||

* 3552 dual socket G34 [http://docs.cray.com/cgi-bin/craydoc.cgi?mode=View;id=S-2496-4001;right=/books/S-2496-4001/html-S-2496-4001//appendix.3.Yp4mYMuW.html '''compute nodes'''] / 113.664 cores | |||

** [http://www.amd.com/de/products/server/processors/6000-series-platform/Pages/6000-series-platform.aspx AMD Opteron(tm)] 6276 (Interlagos) processors (2 per node) | |||

*** an Interlagos CPU is composed of 2 Orchi Dies;<br />an Orchi Die consists of 4 Bulldozer modules<br />(see also sketch [[#InterlagosSchematics|below]]) | |||

*** 2*4*2= 16 Cores/CPU @ 2.3 GHz (up to 3.2 GHz with TurboCore) | |||

*** 32MB L2+L3 Cache, 16MB L3 Cache | *** 32MB L2+L3 Cache, 16MB L3 Cache | ||

*** HyperTransport HT3 | *** 2*2*2 channels of DDR3 PC3-12800 bandwidth to 8 DIMMs (4GB each)<br />(2*12.8=25.6 GB/s dual channel Orchi data rate, 51.2 GB/s CPU quad channel data rate, 2*51.2=102.4 GB/s per node) | ||

*** Peak performance: 2.3*4*16=147.2 GFLOP/s per | *** Direct Connect Architecture 2.0 with HyperTransport HT3: 6.4 GT/s*16 Byte/Transfer= 102.4 GB/s | ||

** standard | *** supports ISA extensions SSE4.1, SSE4.2, SSSE3, AVX, AES, PCLMULQDQ, FMA4 and XOP | ||

*** Flex FP: Bulldozer modules (2 cores) share a single 2*128= 256bit floating point unit | |||

*** Peak performance per socket: 2.3*4*16= 147.2 GFLOP/s | |||

** Peak performance per node: 2*147.2= 294.4 GFLOP/s | |||

** 3072 standard nodes (86.5%) equipped with 32GB RAM/node (2GB/Core for 98304 Cores);<br /> 480 nodes (13.5%) equipped with 64GB memory (4GB/Core for 15360 Cores) | |||

** stream benchmark shows about 65 GB/s data transfer for a node | |||

* 96 '''service nodes''' (Network nodes, mom nodes, router nodes, DVS nodes, boot, database, syslog) | * 96 '''service nodes''' (Network nodes, mom nodes, router nodes, DVS nodes, boot, database, syslog) | ||

* High Speed Network '''CRAY Gemini''' | * High Speed Network '''CRAY Gemini''' | ||

| Line 19: | Line 28: | ||

** external login servers | ** external login servers | ||

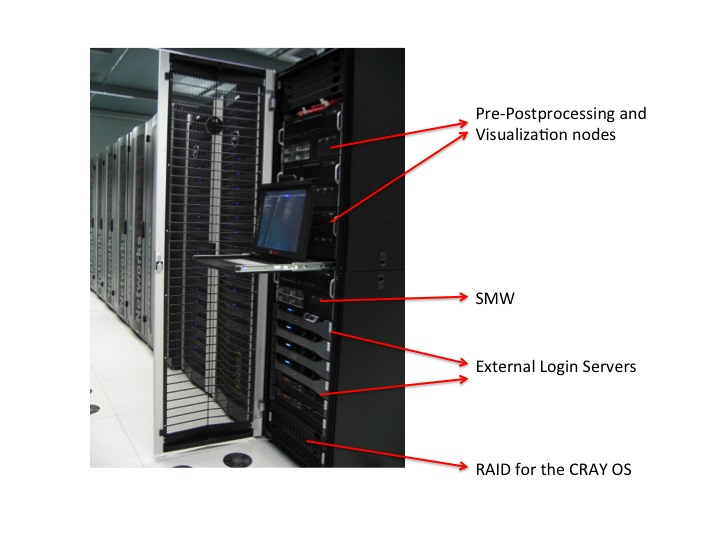

** pre-post processing and visualization nodes | ** pre-post processing and visualization nodes | ||

*** 128GB | *** 4x [http://ark.intel.com/products/46498/Intel-Xeon-Processor-X7550-18M-Cache-2_00-GHz-6_40-GTs-Intel-QPI Intel Xeon X7550] (Nehalem EX OctCore), 2.00GHz (4*8=32 Cores for 32*2=64 HyperThreads) | ||

*** one node comes with 1TB memory | *** 128GB RAM | ||

*** one node comes with 1TB memory (shared usage) | |||

*** local disks | *** local disks | ||

*** | *** [http://www.nvidia.de/object/product-quadro-6000-de.html Quadro 6000] rev 2.0 (GF100 Fermi) GPU, 14 SM, 448 Cuda Cores, 6 GB GDDR5 RAM (384bit Interface mit 144 GB/s) | ||

*** direct access to parallel filesytem | *** direct access to parallel filesytem | ||

* infrastructure servers | * infrastructure servers | ||

| Line 40: | Line 50: | ||

* compute nodes and pre-post processing nodes | * compute nodes and pre-post processing nodes | ||

** are only available for user using the [[CRAY_XE6_Using_the_Batch_System| batch system]] and the Application Level Placement Scheduler (ALPS), see [ | ** are only available for user using the [[CRAY_XE6_Using_the_Batch_System| batch system]] and the Application Level Placement Scheduler (ALPS), see [http://docs.cray.com/cgi-bin/craydoc.cgi?mode=View;id=S-2496-4001;right=/books/S-2496-4001/html-S-2496-4001//cnl_apps.html running applications]. | ||

*** There are compute nodes with 32 GB memory and 64 GB memory available each with fast interconnect (CRAY Gemini) | *** There are compute nodes with 32 GB memory and 64 GB memory available each with fast interconnect (CRAY Gemini) | ||

*** The pre- and postprocessing/visualization infrastructure aims to support users with | *** The pre- and postprocessing/visualization infrastructure aims to support users with | ||

| Line 52: | Line 62: | ||

=== AMD Opteron 6200 Series Processor (Interlagos) === | === AMD Opteron 6200 Series Processor (Interlagos) === | ||

[[Image:Interlagos.jpg]] | <div id="InterlagosSchematics">[[Image:Interlagos.jpg]]</div> | ||

=== AMD Turbo Core technology === | === AMD Turbo Core technology === | ||

| Line 65: | Line 75: | ||

=== Software Features === | === Software Features === | ||

* Cray Linux Environment (CLE) 4 | * Cray Linux Environment (CLE) 4 operating system | ||

* Operating System is based on SUSE Linux Enterprise Server (SLES) 11 | * Operating System is based on SUSE Linux Enterprise Server (SLES) 11 | ||

* Cray Gemini interconnection network | * Cray Gemini interconnection network | ||

* [ | * [http://docs.cray.com/cgi-bin/craydoc.cgi?mode=View;id=S-2496-4001;right=/books/S-2496-4001/html-S-2496-4001//chapter-9b6qil6d-craigf.html Cluster Compatibility Mode (CCM)] functionality enables cluster-based independent software vendor (ISV) applications to run without modification on Cray systems. | ||

* Batch System: torque, moab | * Batch System: torque, moab | ||

* many development tools available: | * many development tools available: | ||

** [https://fs.hlrs.de/projects/craydoc/ | ** [https://fs.hlrs.de/projects/craydoc/docs_merged/books/S-2396-601/html-S-2396-601/chapter-xpb5m1kn-brbethke.html Compiler]: Cray, PGI, GNU, | ||

** [https://fs.hlrs.de/projects/craydoc/ | ** [https://fs.hlrs.de/projects/craydoc/docs_merged/books/S-2396-601/html-S-2396-601/chapter-acu9eycn-brbethke.html Debugging]: DDT, ATP,... | ||

** [https://fs.hlrs.de/projects/craydoc/ | ** [https://fs.hlrs.de/projects/craydoc/docs_merged/books/S-2396-601/html-S-2396-601/chapter-o8nktdbi-brbethke.html Optimizing Code]: CrayPat, Cray Apprentice, PAPI | ||

** [https://fs.hlrs.de/projects/craydoc/ | ** [https://fs.hlrs.de/projects/craydoc/docs_merged/books/S-2396-601/html-S-2396-601/chapter-ck3x6qu8-brbethke.html Libraries]: BLAS, LAPACK, FFTW, PETSc, MPT,.... | ||

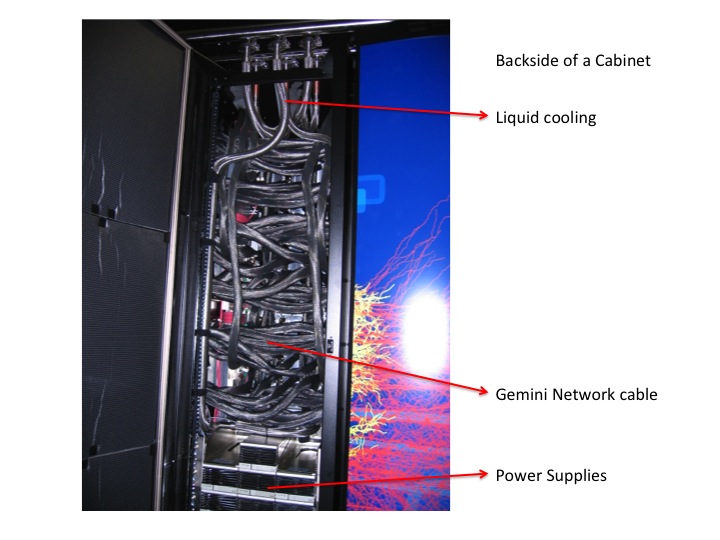

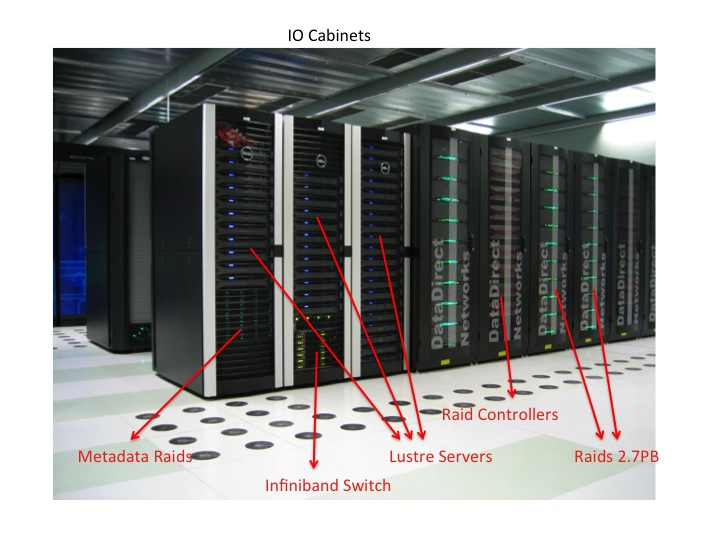

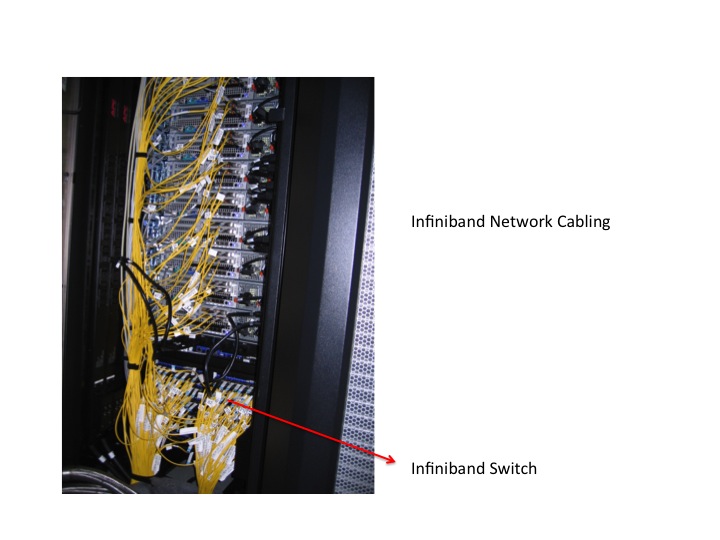

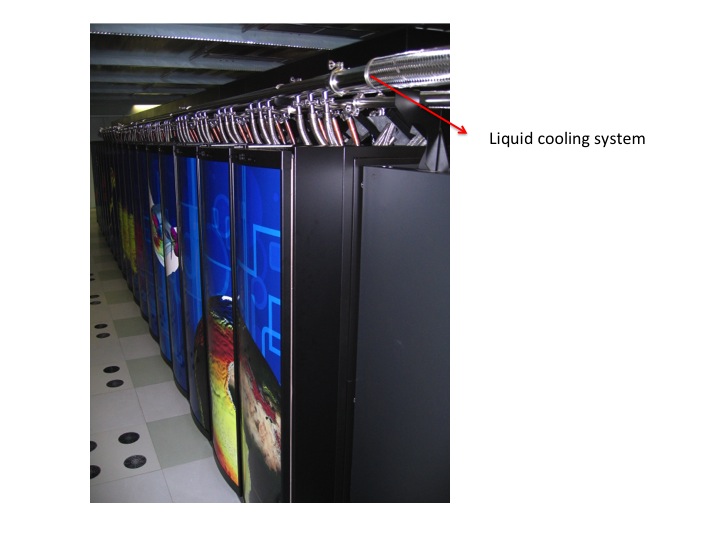

== Pictures of installation step 1 == | == Pictures and video of installation step 1 == | ||

[http://www.youtube.com/watch?v=3qirlkHRKR0 Video of the installation] | |||

[[Image:Hermit1-Folie1.jpg]] | [[Image:Hermit1-Folie1.jpg]] | ||

| Line 83: | Line 95: | ||

[[Image:Hermit1-Folie4.jpg]] | [[Image:Hermit1-Folie4.jpg]] | ||

[[Image:Hermit1-Folie5.jpg]] | [[Image:Hermit1-Folie5.jpg]] | ||

Cooling Liquid: R134a ([https://en.wikipedia.org/wiki/1,1,1,2-Tetrafluoroethane Tetrafluoroethane]) | |||

[[Image:Hermit1-Folie6.jpg]] | [[Image:Hermit1-Folie6.jpg]] | ||

[[Image:Hermit1-Folie7.jpg]] | [[Image:Hermit1-Folie7.jpg]] | ||

[[Image:Hermit1-Folie8.jpg]] | [[Image:Hermit1-Folie8.jpg]] | ||

[[Image:Hermit1-Folie9.jpg]] | [[Image:Hermit1-Folie9.jpg]] | ||

Latest revision as of 11:18, 18 November 2016

Hardware of Installation step 1

Summary Phase 1 Step 1

- Cray XE6 supercomputer named Hermit

- Peak performance for the whole system: about 1 PFLOP/s (3552*294.4=1045708.8 GFLOP/s)

- total compute node RAM: 126 TB (3072*32+480*64=129024 GB)

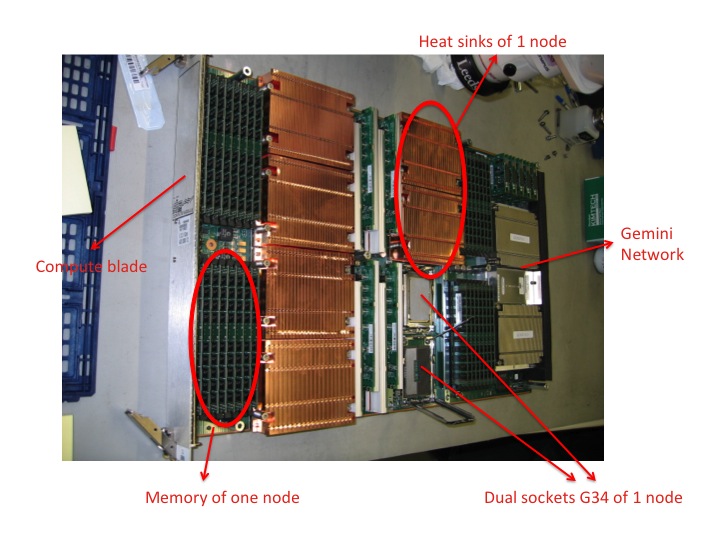

- 3552 dual socket G34 compute nodes / 113.664 cores

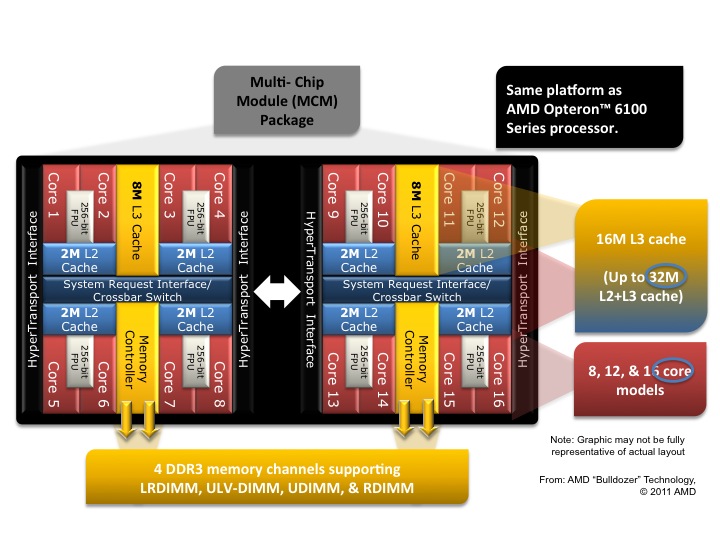

- AMD Opteron(tm) 6276 (Interlagos) processors (2 per node)

- an Interlagos CPU is composed of 2 Orchi Dies;

an Orchi Die consists of 4 Bulldozer modules

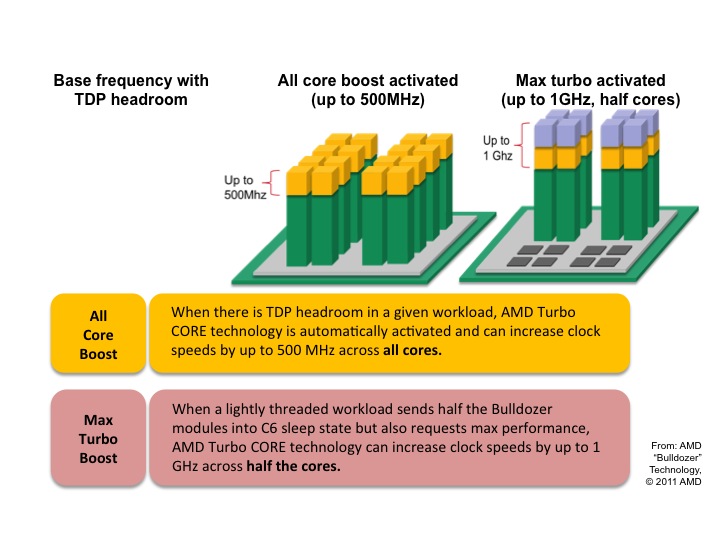

(see also sketch below) - 2*4*2= 16 Cores/CPU @ 2.3 GHz (up to 3.2 GHz with TurboCore)

- 32MB L2+L3 Cache, 16MB L3 Cache

- 2*2*2 channels of DDR3 PC3-12800 bandwidth to 8 DIMMs (4GB each)

(2*12.8=25.6 GB/s dual channel Orchi data rate, 51.2 GB/s CPU quad channel data rate, 2*51.2=102.4 GB/s per node) - Direct Connect Architecture 2.0 with HyperTransport HT3: 6.4 GT/s*16 Byte/Transfer= 102.4 GB/s

- supports ISA extensions SSE4.1, SSE4.2, SSSE3, AVX, AES, PCLMULQDQ, FMA4 and XOP

- Flex FP: Bulldozer modules (2 cores) share a single 2*128= 256bit floating point unit

- Peak performance per socket: 2.3*4*16= 147.2 GFLOP/s

- an Interlagos CPU is composed of 2 Orchi Dies;

- Peak performance per node: 2*147.2= 294.4 GFLOP/s

- 3072 standard nodes (86.5%) equipped with 32GB RAM/node (2GB/Core for 98304 Cores);

480 nodes (13.5%) equipped with 64GB memory (4GB/Core for 15360 Cores) - stream benchmark shows about 65 GB/s data transfer for a node

- AMD Opteron(tm) 6276 (Interlagos) processors (2 per node)

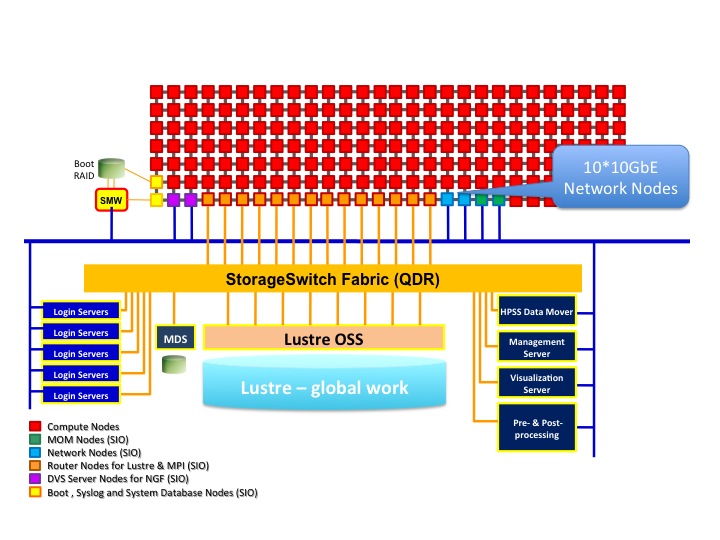

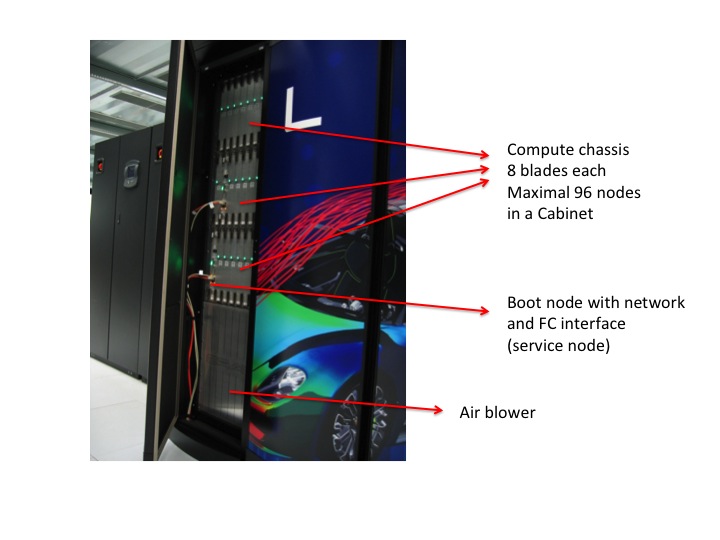

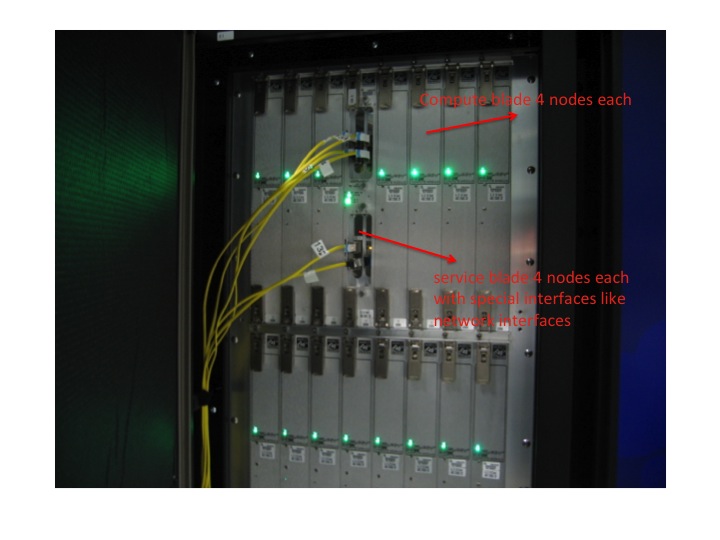

- 96 service nodes (Network nodes, mom nodes, router nodes, DVS nodes, boot, database, syslog)

- High Speed Network CRAY Gemini

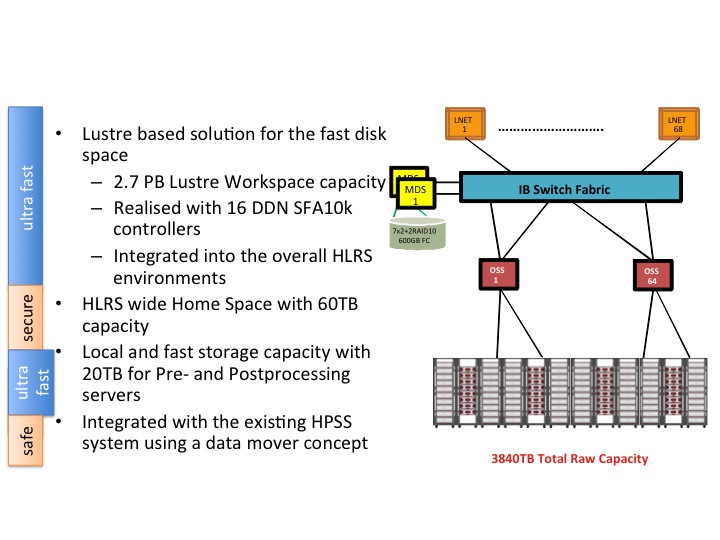

- users HOME filesystem:

- ~60TB (BLUEARC mercury 55)

- workspace filesystem:

- Lustre parallel filesystem

- capacity 2.7 PB realized with 16 DDN SFA10K controllers

- IO bandwith ~150GB/s

- special user nodes:

- external login servers

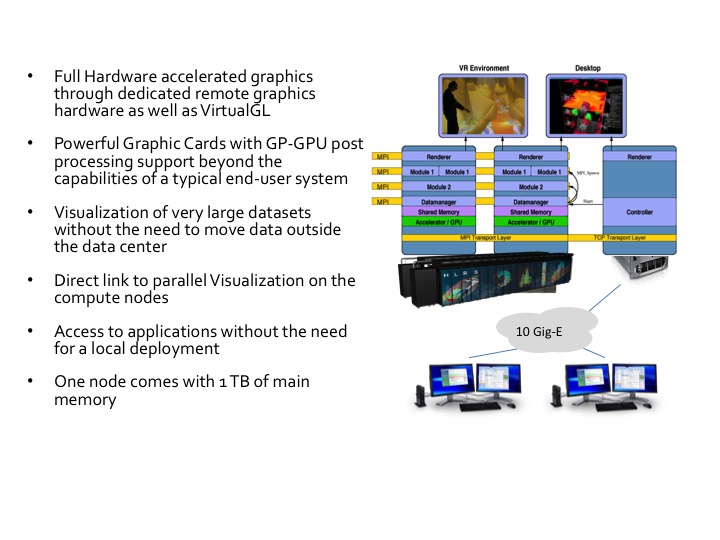

- pre-post processing and visualization nodes

- 4x Intel Xeon X7550 (Nehalem EX OctCore), 2.00GHz (4*8=32 Cores for 32*2=64 HyperThreads)

- 128GB RAM

- one node comes with 1TB memory (shared usage)

- local disks

- Quadro 6000 rev 2.0 (GF100 Fermi) GPU, 14 SM, 448 Cuda Cores, 6 GB GDDR5 RAM (384bit Interface mit 144 GB/s)

- direct access to parallel filesytem

- infrastructure servers
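The arithmetic quoted in the summary above can be re-derived in a few lines. The following is a minimal sketch that uses only the numbers from the list; the variable names are illustrative, and the factor of 4 FLOP per cycle and core is simply the one implied by the expression 2.3*4*16.

```python
# Re-derive the summary figures from the per-node data given in the list.
# All names below are illustrative; the inputs are the values quoted above.

CLOCK_GHZ = 2.3          # base clock of the Opteron 6276
FLOP_PER_CYCLE = 4       # per core, the factor used in 2.3*4*16
CORES_PER_SOCKET = 16    # 2 dies * 4 modules * 2 cores
SOCKETS_PER_NODE = 2
NODES_32GB = 3072
NODES_64GB = 480

nodes = NODES_32GB + NODES_64GB
cores = nodes * SOCKETS_PER_NODE * CORES_PER_SOCKET

# Peak floating-point performance in GFLOP/s
peak_socket = CLOCK_GHZ * FLOP_PER_CYCLE * CORES_PER_SOCKET
peak_node = SOCKETS_PER_NODE * peak_socket
peak_system = nodes * peak_node

# Total compute node memory
ram_gb = NODES_32GB * 32 + NODES_64GB * 64
ram_tb = ram_gb / 1024

# Theoretical memory bandwidth in GB/s
CHANNEL_GBS = 12.8                    # one DDR3 PC3-12800 channel
bw_die = 2 * CHANNEL_GBS              # dual channel per die
bw_socket = 2 * bw_die                # two dies per CPU
bw_node = SOCKETS_PER_NODE * bw_socket

# Share of the theoretical node bandwidth reached by the STREAM result
STREAM_GBS = 65.0
stream_fraction = STREAM_GBS / bw_node

print(f"nodes: {nodes}, cores: {cores}")
print(f"peak: {peak_socket:.1f} / {peak_node:.1f} / {peak_system:.1f} GFLOP/s "
      "(socket / node / system)")
print(f"RAM: {ram_gb} GB = {ram_tb:.0f} TB")
print(f"memory bandwidth per node: {bw_node:.1f} GB/s, "
      f"STREAM reaches {stream_fraction:.0%} of it")
```

Read this way, the measured STREAM rate of about 65 GB/s corresponds to a bit under two thirds of the theoretical 102.4 GB/s per node.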

Architecture

- System Management Workstation (SMW)
  - system administrator's console for managing the Cray system: monitoring, installing/upgrading software, controlling the hardware, and starting and stopping the XE6 system
- service nodes are classified into:
  - login nodes for users to access the system
  - boot nodes, which provide the OS for all other nodes, licenses, ...
  - network nodes, which provide e.g. external network connections for the compute nodes
  - Cray Data Virtualization Service (DVS) nodes: DVS is an I/O forwarding service that can parallelize the I/O transactions of an underlying POSIX-compliant file system
  - sdb node for services like ALPS, torque, moab, cray management services, ...
  - I/O nodes for e.g. Lustre
  - MOM (torque) nodes, which place user jobs from the batch system into execution
- compute nodes and pre-post processing nodes
  - are only available to users through the batch system and the Application Level Placement Scheduler (ALPS), see the Cray documentation on running applications
    - compute nodes are available with 32 GB and with 64 GB of memory, each with the fast interconnect (CRAY Gemini)
    - the pre- and postprocessing/visualization infrastructure aims to support users with
      - complex workflows and advanced access methods
      - remote graphics rendering and simulation steering, in order to minimize data move operations
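Because compute nodes come in a 32 GB and a 64 GB variant, it can help to estimate beforehand how many nodes a job needs and which variant it fits on. The sketch below is a hypothetical helper, not part of ALPS, torque or moab. It assumes 32 cores per node as listed in the hardware summary and ignores the memory taken by the operating system, so real limits are somewhat lower.

```python
import math

CORES_PER_NODE = 32        # 2 sockets * 16 cores, from the hardware summary
NODE_TYPES_GB = (32, 64)   # memory of the two compute node variants


def plan_job(ranks, mem_per_rank_gb, ranks_per_node=CORES_PER_NODE):
    """Return (node_count, node_memory_gb) for a job.

    Hypothetical planning helper: picks the smallest node variant whose
    memory covers ranks_per_node * mem_per_rank_gb. Raises ValueError if
    neither variant is large enough.
    """
    if ranks < 1:
        raise ValueError("ranks must be at least 1")
    if not 1 <= ranks_per_node <= CORES_PER_NODE:
        raise ValueError("ranks_per_node must be between 1 and 32")

    needed_gb = mem_per_rank_gb * ranks_per_node
    for node_gb in NODE_TYPES_GB:
        if needed_gb <= node_gb:
            return math.ceil(ranks / ranks_per_node), node_gb
    raise ValueError("job does not fit on a node; reduce ranks_per_node")


if __name__ == "__main__":
    # 1024 ranks with 1.5 GB each, fully populated nodes
    print(plan_job(1024, 1.5))
    # 100 ranks with 3 GB each, only 16 ranks placed per node
    print(plan_job(100, 3.0, ranks_per_node=16))
```

Placing fewer ranks per node, as in the second call, trades idle cores for more memory per rank.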

Conceptual Architecture

AMD Opteron 6200 Series Processor (Interlagos)

AMD Turbo Core technology

Storage Solution for Hermit installation step 1

Pre-Postprocessing Visualization Server

Software Features

- Cray Linux Environment (CLE) 4 operating system

- Operating System is based on SUSE Linux Enterprise Server (SLES) 11

- Cray Gemini interconnection network

- Cluster Compatibility Mode (CCM) functionality enables cluster-based independent software vendor (ISV) applications to run without modification on Cray systems.

- Batch System: torque, moab

- many development tools available:
  - Compiler: Cray, PGI, GNU
  - Debugging: DDT, ATP, ...
  - Optimizing Code: CrayPat, Cray Apprentice, PAPI
  - Libraries: BLAS, LAPACK, FFTW, PETSc, MPT, ...

Pictures and video of installation step 1

Video of the installation: http://www.youtube.com/watch?v=3qirlkHRKR0

Cooling Liquid: R134a (Tetrafluoroethane)