- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

Vampir

m (Adapt hint about handle-able trace file size for vampir) |

|||

| (11 intermediate revisions by 2 users not shown) | |||

| Line 13: | Line 13: | ||

== Usage == | == Usage == | ||

In order to use Vampir, You first need to generate a trace of Your application, | Vampir consists of a GUI interface and a analysis backend. In order to use Vampir, You first need to generate a trace of Your application, preferably using [[Vampirtrace | VampirTrace]]. | ||

The Open Trace Format (OTF) trace consists of a file for each MPI process (<tt>*.events.z</tt>) a trace definition file (<tt>*.def.z</tt>) and the master trace file (<tt>*.otf</tt>) describing the other files. Fore details how to generate OTF traces see [[Vampirtrace]]. | |||

=== Vampir === | |||

To analyze small traces (< 10 GB of trace data), you can use Vampir standalone with the default backend: | |||

To | |||

{{Command|command= | {{Command|command= | ||

module load performance/vampir | module load performance/vampir<br> | ||

vampir | vampir | ||

}} | }} | ||

=== VampirServer === | |||

VampirServer | For large-scale traces (> 10GB and up to many thousand MPI processes), use the parallel VampirServer backend (on compute nodes allocated through the queuing system), and attach to it using vampir. | ||

Most likely you will select the number of nodes based on the trace file size and the memory available on a single node. However, you may need more than two times the memory to hold the trace. | |||

To start the server get an interactive node first. To run the vampirserver for 1 hour on 4 nodes of HAWK with 512 processes: | |||

{{Command|command=qsub -I -lselect=4:mpiprocs=128,walltime=1:0:0 | |||

module load vampirserver # on vulcan use module load performance/vampirserver instead | |||

{{Command | vampirserver start -n $((512 - 1)) | ||

| command = module load performance/vampirserver | |||

}} | }} | ||

{{Warning|text=The number of analysis processes must not exceed the number of processes requested minus one!}} | |||

This will show you a connection host and port: | |||

<pre> | |||

VampirServer 9.8.0 | |||

{{ | |||

| | |||

The | |||

This will show you a connection host and port: | |||

<pre> | |||

VampirServer | |||

Licensed to HLRS | Licensed to HLRS | ||

Running | Running 511 analysis processes. (abort with vampirserver stop 66) | ||

Server listens on: r15c1t7n1:30000 | |||

</pre> | </pre> | ||

From this output note down the server name and port as well as the command to stop the vampirserver. | |||

Now open a new shell, and login to one of the login nodes of the system via ssh (don't forget X-forwarding) and open vampir. | |||

{{Command|command=module load vampir # on vulcan module load performance/vampir | |||

vampir | |||

}} | |||

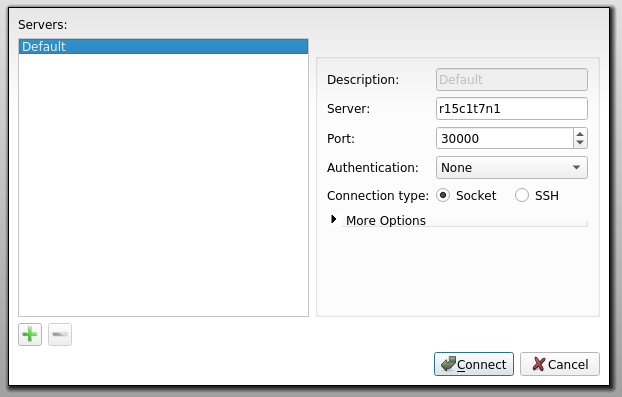

Select open other and then chose "Remote File". In the opening window enter the server name and port displayed by VampirServer before. Proceed and select the trace you want to open. | |||

[[Image:vampir_remote_open.png|Example of remote open on Nehalem-Cluster]] | |||

== See also == | == See also == | ||

Latest revision as of 13:53, 30 April 2020

The Vampir suite of tools offers scalable event analysis through a GUI that enables fast, interactive rendering of very complex performance data. The suite consists of VampirTrace, Vampir and VampirServer. Very large data volumes can be analyzed with a parallel version of VampirServer, which loads and analyzes the data on the compute nodes while the Vampir GUI attaches to it.

Vampir is based on the standard Qt toolkit and runs on Unix desktop workstations as well as on parallel production systems. It is available for nearly all platforms, such as Linux-based PCs and clusters, IBM, SGI, Sun, NEC, HP and Apple.


Usage

Vampir consists of a GUI and an analysis backend. To use Vampir, you first need to generate a trace of your application, preferably using VampirTrace. An Open Trace Format (OTF) trace consists of one file per MPI process (*.events.z), a trace definition file (*.def.z) and the master trace file (*.otf), which describes the other files. For details on how to generate OTF traces, see Vampirtrace.
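To decide between standalone Vampir and VampirServer (see the 10 GB guideline below), it can help to know the total size of a trace. The following is a minimal sketch, assuming that all files of a trace share the prefix of the master file (for example myapp.otf, myapp.0.def.z, myapp.1.events.z) and live in one directory; the function names otf_trace_size and suggest_backend are hypothetical, and 10 GB is treated as 10 GiB.

```shell
# Sketch: sum the on-disk size of all files belonging to one OTF trace.
# Assumption: every trace file starts with the master file's prefix,
# e.g. myapp.otf, myapp.0.def.z, myapp.1.events.z (naming may differ).
otf_trace_size() {
    local master="$1"              # path to the master file, e.g. traces/myapp.otf
    local prefix="${master%.otf}"  # strip ".otf" to get the common prefix
    local total=0 f size
    for f in "$prefix".*; do
        [ -f "$f" ] || continue    # skip the literal pattern if nothing matched
        size=$(wc -c < "$f")       # size in bytes
        total=$((total + size))
    done
    echo "$total"
}

# Suggest a backend using the 10 GB guideline (treated here as 10 GiB).
suggest_backend() {
    local bytes="$1"
    local limit=$((10 * 1024 * 1024 * 1024))
    if [ "$bytes" -lt "$limit" ]; then
        echo "vampir"
    else
        echo "vampirserver"
    fi
}
```

Usage: `suggest_backend "$(otf_trace_size traces/myapp.otf)"`, where traces/myapp.otf is a placeholder path.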

Vampir

To analyze small traces (< 10 GB of trace data), you can use Vampir standalone with its default backend:

module load vampir # on vulcan use module load performance/vampir instead
vampir

VampirServer

For large-scale traces (> 10 GB, up to many thousands of MPI processes), use the parallel VampirServer backend on compute nodes allocated through the queuing system, and attach to it with vampir. You will most likely choose the number of nodes based on the trace size and the memory available on a single node. Note, however, that holding the trace may require more than twice its size in memory.
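The sizing rule above boils down to simple arithmetic: nodes = ceil(factor × trace size / memory per node), and the value passed to `vampirserver start -n` is the total number of requested processes minus one, as in the example below. This is a rough sketch, assuming sizes in whole GB and a memory factor of 2 (the real requirement may be higher); the function names vampir_nodes and vampir_analysis_procs are hypothetical, and the 256 GB per node in the usage example is an assumption, so check the node specification of your system.

```shell
# Sketch: rough sizing for a VampirServer job.
# Assumptions: sizes in whole GB; the memory factor defaults to 2,
# but the actual memory requirement may be higher.
vampir_nodes() {
    local trace_gb="$1" mem_per_node_gb="$2" factor="${3:-2}"
    local needed=$((factor * trace_gb))
    # nodes = ceil(needed / mem_per_node_gb) using integer arithmetic
    local nodes=$(( (needed + mem_per_node_gb - 1) / mem_per_node_gb ))
    if [ "$nodes" -lt 1 ]; then
        nodes=1   # always request at least one node
    fi
    echo "$nodes"
}

# Value for "vampirserver start -n": all requested processes minus one.
vampir_analysis_procs() {
    local nodes="$1" procs_per_node="$2"
    echo $((nodes * procs_per_node - 1))
}
```

For a 300 GB trace and an assumed 256 GB per node, `vampir_nodes 300 256` prints 3; `vampir_analysis_procs 4 128` prints 511, matching the 4-node example below.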

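To start the server, first request an interactive job. For example, to run VampirServer for 1 hour on 4 nodes of HAWK with 512 processes:

qsub -I -lselect=4:mpiprocs=128,walltime=1:0:0
module load vampirserver # on vulcan use module load performance/vampirserver instead
vampirserver start -n $((512 - 1))

Warning: The number of analysis processes must not exceed the number of requested processes minus one.

This will show you the connection host and port: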

VampirServer 9.8.0
Licensed to HLRS
Running 511 analysis processes. (abort with vampirserver stop 66)
Server listens on: r15c1t7n1:30000

From this output, note the server name and port as well as the command to stop VampirServer.
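If the server output has been saved to a file, the connection details can be extracted with standard text tools instead of being copied by hand. This is a sketch that assumes the output format shown above (a line starting with "Server listens on:" and a line containing "vampirserver stop <id>"); the format may differ between VampirServer versions, and the function names vs_endpoint and vs_stop_command as well as any log file name are hypothetical.

```shell
# Sketch: extract connection details from saved VampirServer output.
# Assumption: the output looks like the example above.
vs_endpoint() {
    # prints the host:port pair, e.g. "r15c1t7n1:30000"
    sed -n 's/^.*Server listens on: *\([^ ]*\).*$/\1/p' "$1" | head -n 1
}

vs_stop_command() {
    # prints the stop command, e.g. "vampirserver stop 66"
    grep -o 'vampirserver stop [0-9][0-9]*' "$1" | head -n 1
}
```

The host and port can then be split with parameter expansion: `ep=$(vs_endpoint server.log); host=${ep%%:*}; port=${ep##*:}`.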

Now open a new shell, log in to one of the login nodes of the system via ssh (with X forwarding enabled), and start vampir:

module load vampir # on vulcan use module load performance/vampir instead
vampir

Select "Open Other" and then choose "Remote File". In the dialog that opens, enter the server name and port displayed by VampirServer. Proceed and select the trace you want to open.

Figure: Example of remote open on Nehalem-Cluster