- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

Vampir

Revision as of 14:06, 25 January 2013

The Vampir suite of tools offers scalable event analysis through a GUI that renders very complex performance data quickly and interactively. The suite consists of VampirTrace, Vampir, and VampirServer. Very large data volumes can be analyzed with the parallel VampirServer, which loads and analyzes the data on the compute nodes while the Vampir GUI attaches to it.

Vampir is based on standard Qt and works on desktop Unix workstations as well as on parallel production systems. The program is available for nearly all platforms, such as Linux-based PCs and clusters, IBM, SGI, Sun, NEC, HP, and Apple.


Usage

Vampir consists of a GUI and an analysis backend. In order to use Vampir, you first need to generate a trace of your application, preferably using VampirTrace. The Open Trace Format (OTF) trace consists of a file for each MPI process (*.events.z), a trace definition file (*.def.z), and the master trace file (*.otf) describing the other files. For details on how to generate OTF traces, see Vampirtrace.
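Before opening a trace, it can help to confirm that all three kinds of files are present. The following is a minimal sketch, not part of Vampir itself: the function name `check_otf_trace` is hypothetical, and the assumption that all files share the master file's base name is inferred from the patterns above.

```shell
# Check that an OTF trace looks complete before opening it in Vampir.
# Usage: check_otf_trace /path/to/app.otf
check_otf_trace() {
    master=$1
    base=${master%.otf}

    # The master file (*.otf) describes all other files.
    if [ ! -f "$master" ]; then
        echo "missing master file: $master" >&2
        return 1
    fi

    # Assumption: the definition file shares the base name; match loosely.
    set -- "$base".*def.z
    if [ ! -e "$1" ]; then
        echo "missing definition file (*.def.z) for $base" >&2
        return 1
    fi

    # One event file per MPI process is expected.
    set -- "$base".*events.z
    if [ ! -e "$1" ]; then
        echo "missing event files (*.events.z) for $base" >&2
        return 1
    fi

    echo "$# event file(s) found for $master"
}
```

Calling `check_otf_trace app.otf` in the trace directory prints the number of event files, which should correspond to the number of MPI processes that were traced.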

To analyze small traces (< 500 MB of trace data), you can use Vampir standalone with the default backend:
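The 500 MB threshold can be checked from the shell before choosing a backend. This is a rough sketch under stated assumptions: the helper names `trace_size_mb` and `pick_backend` are hypothetical, `du` reports disk usage rather than exact file size, and the cut-off is simply the figure quoted above.

```shell
# Approximate size of all files belonging to one trace, in MB (rounded up).
# Assumes every file of the trace shares the master file's base name.
trace_size_mb() {
    base=${1%.otf}
    # du -k prints one size in KiB per file; awk sums them and converts to MB.
    du -k "$base".* | awk '{ s += $1 } END { print int((s + 1023) / 1024) }'
}

# Suggest a backend based on the 500 MB guideline.
pick_backend() {
    if [ "$1" -lt 500 ]; then
        echo "vampir"          # standalone GUI with the default backend
    else
        echo "vampirserver"    # parallel server on the compute nodes
    fi
}
```

For example, `pick_backend "$(trace_size_mb app.otf)"` prints which of the two tools the guideline suggests for the trace `app.otf`.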

For large-scale traces (up to many thousands of MPI processes), use the parallel VampirServer (on compute nodes allocated through the queuing system), and attach to it using vampir:

Then, on the head node, use the Vampir GUI to "Remote open" the same file by attaching to the server running on the compute nodes:

Please note that vampirserver-core is memory-bound and may work best if started with only one MPI process per node, or one per socket, e.g. by passing Open MPI's -bysocket option to mpirun.
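The placement advice above could be expressed as an mpirun command line along the following lines. This is only a sketch: the helper `vampirserver_cmd` is hypothetical, it prints the command rather than running it, `-npernode 1` is assumed to be available in the installed Open MPI version, and any further arguments that vampirserver-core may need are not shown.

```shell
# Print (not run) an mpirun command line that places vampirserver-core
# with one process per socket or one process per node.
# Usage: vampirserver_cmd <number of processes> [socket|node]
vampirserver_cmd() {
    nprocs=$1
    placement=${2:-socket}
    case $placement in
        socket) opt="-bysocket" ;;     # option named in the note above
        node)   opt="-npernode 1" ;;   # assumption: supported by the local Open MPI
        *)
            echo "unknown placement: $placement" >&2
            return 1
            ;;
    esac
    echo "mpirun -np $nprocs $opt vampirserver-core"
}
```

For instance, `vampirserver_cmd 8 socket` prints a line that can be reviewed and then pasted into a job script.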

VampirServer

VampirServer is the parallel version of the graphical trace file analysis software. The tool consists of a client and a server. The server itself is a parallel MPI program.

First load the necessary software module

Now start the server and afterwards the client

on laki:

on hermit:

Note the port number that is displayed, as you will need it later on. Then start the graphical client.

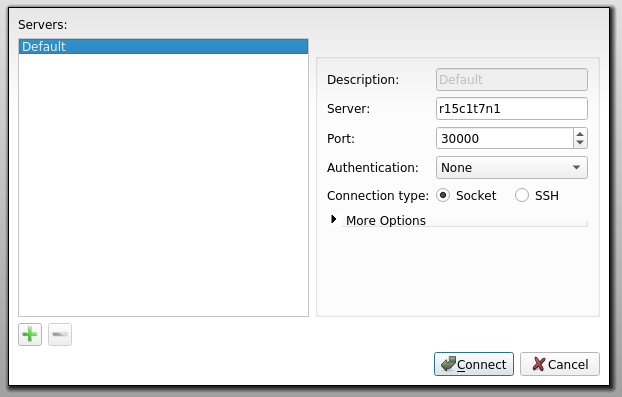

The last step is to connect the client to the server using the port number reported by the server. This is done under

File → Connect to Server ...

Then you can open your traces via

File → Open Tracefile ...

Select the desired *.otf file.

Hermit special

Request an interactive session

Load the vampirserver module

Start the vampirserver on the compute nodes using

This will show you a connection host and port:

 Launching VampirServer...
 VampirServer 7.6.2 (r7777)
 Licensed to HLRS
 Running 31 analysis processes... (abort with vampirserver stop 10)
 VampirServer <10> listens on: nid03447:30000
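The connection host and port can also be picked out of this output automatically. The sketch below assumes the "listens on: host:port" wording shown above stays the same across versions; the function name `server_endpoint` is hypothetical.

```shell
# Read VampirServer start-up output on stdin and print the first
# "host:port" that follows the words "listens on:".
server_endpoint() {
    sed -n 's/.*listens on: *\([^ :]*:[0-9][0-9]*\).*/\1/p' | head -n 1
}

# Split an endpoint such as nid03447:30000 into its two parts.
endpoint_host() { echo "${1%%:*}"; }
endpoint_port() { echo "${1##*:}"; }
```

Piping the server's start-up messages through `server_endpoint` yields the value to enter in the client's connection dialog.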

Now forward this port to one of the login nodes:

Open a new terminal and log in to the login node selected in the previous command:
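A reverse SSH tunnel is one way to do such forwarding. The following sketch only prints a candidate command; it assumes the tunnel is opened from the interactive session, that the login node accepts SSH connections from there, and that the same port number is free on the login node. The helper `forward_cmd` and the login node name in the example are hypothetical.

```shell
# Print (not run) an ssh command that makes the server's port available
# on a login node under the same port number.
# Usage: forward_cmd <host:port of the server> <login node>
forward_cmd() {
    endpoint=$1               # e.g. nid03447:30000
    login_node=$2             # hypothetical: whichever login node you choose
    port=${endpoint##*:}
    # -N: no remote command, -R: listen on the login node and forward back
    echo "ssh -N -R $port:$endpoint $login_node"
}
```

With the output shown above, `forward_cmd nid03447:30000 login1` prints `ssh -N -R 30000:nid03447:30000 login1`; a client started on that login node would then connect to localhost and port 30000.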

Now you can proceed as described in section Vampir