- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

CRAY XC30 Using the Batch System SLURM

Introduction

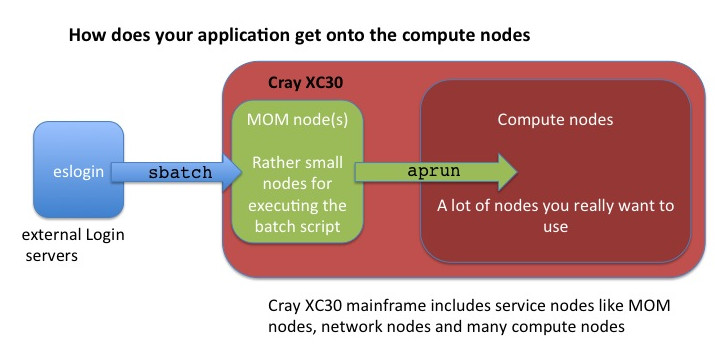

The only way to start a parallel job on the compute nodes of this system is to use the batch system. The installed batch system is based on

- the resource management system SLURM (Simple Linux Utility for Resource Management)

SLURM is designed to operate as a job scheduler on top of Cray's Application Level Placement Scheduler (ALPS). Use SLURM's sbatch or salloc commands to create a resource allocation in ALPS, then use the ALPS aprun command to launch parallel jobs within that allocation. Note that on the CRAY XC30, user applications are always launched on the compute nodes with the application launcher aprun, which submits them to ALPS for placement and execution.

Detailed information about how to use this system, along with many examples, can be found in the Cray Programming Environment User's Guide and in Workload Management and Application Placement for the Cray Linux Environment.

- ALPS is always used for scheduling a job on the compute nodes. It does not care about the programming model you use, so we need a few general definitions:

- PE: Processing Element, basically a Unix 'process'. It can be an MPI task, a CAF image, a UPC thread, ...

- numa_node: The cores and memory on a node with 'flat' memory access, basically one of the two dies of the Intel node together with its directly attached memory.

- Thread A thread is contained inside a process. Multiple threads can exist within the same process and share resources such as memory, while different PEs do not share these resources. Most likely you will use OpenMP threads.

- aprun is the ALPS (Application Level Placement Scheduler) application launcher

- It must be used to run applications on the XC compute nodes, both interactively and in a batch job

- If aprun is not used, the application is launched on the MOM node (and will most likely fail)

- The aprun man page contains several useful examples. There are at least 3 important parameters to control:

- The total number of PEs: -n

- The number of PEs per node: -N

- The number of OpenMP threads: -d (the 'stride' between 2 PEs in a node)

- sbatch is the SLURM submission command for batch job scripts.

Writing a batch submission script is typically the most convenient way to submit your job to the batch system.

You generally interact with the batch system in two ways: through options specified in job submission scripts (detailed in the examples below) and by using SLURM commands on the login nodes. The main commands for interacting with SLURM are:

- sbatch is used to submit a job script for later execution.

- scancel is used to cancel a pending or running job or job step. It can also be used to send an arbitrary signal to all processes associated with a running job or job step.

- sinfo reports the state of partitions and nodes managed by SLURM. It has a wide variety of filtering, sorting, and formatting options.

- squeue reports the state of jobs or job steps. It has a wide variety of filtering, sorting, and formatting options. By default, it reports the running jobs in priority order and then the pending jobs in priority order.

- srun is used to submit a job for execution or initiate job steps in real time.

- salloc is used to allocate resources for a job in real time.

- sacct is used to report job or job step accounting information about active or completed jobs.

Check the man pages of SLURM and ALPS for more advanced commands and options:

man slurm

man aprun

or read the SLURM Documentation

Requesting Resources with SLURM

Requesting the required resources for a batch job

- The number of required nodes and cores is set by parameters in the job script header. Each parameter line starts with "#SBATCH" and must appear before any executable command in the script.

#SBATCH --job-name=MYJOB

#SBATCH --nodes=1

#SBATCH --time=00:10:00

- The job is submitted by the sbatch command (all #SBATCH script header parameters can also be passed directly as sbatch command options).

- The batch script is not necessarily granted resources immediately. It may sit in the queue of pending jobs for some time before its required resources become available.

- At the end of the execution, the output and error files are returned to the submission directory.

Other SLURM options

- File names of stdout and stderr

#SBATCH --output=std.out

#SBATCH --error=std.err

- Start N PEs (tasks)

#SBATCH --ntasks=N

- Start M tasks per node. The total number of nodes used is N/M (+1 if mod(N,M)!=0). The maximum is M=32

#SBATCH --ntasks-per-node=M

- Number of threads per task. Mostly used together with OMP_NUM_THREADS

#SBATCH --cpus-per-task=8
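The node-count rule given above for --ntasks and --ntasks-per-node is a rounded-up integer division. A minimal sketch, assuming a hypothetical helper named nodes_needed (it is not part of SLURM or ALPS):

```shell
# Hypothetical helper: nodes used for N tasks with M tasks per node,
# i.e. N/M, plus 1 if mod(N,M) != 0.
nodes_needed() {
    local ntasks per_node
    ntasks=$1
    per_node=$2
    # Integer ceiling of ntasks / per_node
    echo $(( (ntasks + per_node - 1) / per_node ))
}

nodes_needed 512 32   # prints 16
nodes_needed 100 32   # prints 4 (3 full nodes plus 1 for the remainder)
```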

Understanding aprun

SLURM and ALPS

The following SLURM options are translated to these aprun options. SLURM options not listed below have no equivalent aprun translation and while the option can be used for the allocation within Slurm it will not be propagated to aprun.

| SLURM options | aprun options |
|---|---|

- The following aprun options have no equivalent in srun and must be specified by using the SLURM --launcher-opts option: -a, -b, -B, -cc, -f, -r, and -sl.

- -B will provide aprun with the SLURM settings for -n, -N , -d and -m

aprun -B ./a.out

Core specialization

System 'noise' on compute nodes may significantly degrade scalability for some applications. Core specialization can mitigate this problem.

- 1 core per node will be dedicated for system work (service core)

- As many system interrupts as possible will be forced to execute on the service core

- The application will not run on the service core

To get core specialization, use aprun -r:

aprun -r1 -n 100 a.out

The highest-numbered cores are used, starting with 31 on current nodes (independent of the aprun -j setting).

The apcount utility is provided to compute the total number of cores required:

man apcount

Hyperthreading

Cray compute nodes are always booted with hyperthreading ON. Users can choose to run with one or two PEs or threads per core. The default is one. You can make your choice at runtime:

aprun -n### -j1 … -> Single Stream mode, one rank per core

aprun -n### -j2 … -> Dual Stream mode, two ranks per core

In single stream mode the cores are numbered 0-7 on die 0 and 8-15 on die 1. In dual stream mode the numbering of the first 16 cores (0-15) stays the same, and cores 16-23 are on die 0 and 24-31 on die 1. Note that the core numbering in hyperthread mode is therefore not contiguous:

| Mode | cores on die 0 | cores on die 1 |
|---|---|---|
| Single stream (-j1) | 0-7 | 8-15 |
| Dual stream (-j2) | 0-7, 16-23 | 8-15, 24-31 |
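The core numbering described above can be written as a small calculation. This is a sketch only: the function names numa_node_of_cpu and cpus_on_die are hypothetical, and the layout (8 physical cores per die, 2 dies per node) is taken from the text.

```shell
# Hypothetical helper: die (NUMA node) of a logical CPU number 0-31.
# CPUs 0-7 and 16-23 are on die 0, CPUs 8-15 and 24-31 are on die 1.
numa_node_of_cpu() {
    echo $(( ($1 / 8) % 2 ))
}

# Hypothetical helper: logical CPU ranges on a die.
# $1 = die (0 or 1), $2 = stream mode as passed to aprun -j (1 or 2)
cpus_on_die() {
    local die mode first
    die=$1
    mode=$2
    first=$((die * 8))
    if [ "$mode" -eq 1 ]; then
        echo "$first-$((first + 7))"
    else
        echo "$first-$((first + 7)),$((first + 16))-$((first + 23))"
    fi
}

numa_node_of_cpu 20   # prints 0
cpus_on_die 1 2       # prints 8-15,24-31
```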

aprun CPU Affinity control

CLE can dynamically distribute work by allowing PEs and threads to migrate from one CPU to another within a node. In some cases, moving PEs or threads from CPU to CPU increases cache and translation lookaside buffer (TLB) misses and therefore reduces performance. The CPU affinity options let you bind a PE or thread to a particular CPU or to a subset of CPUs on a node.

- aprun CPU affinity options (see also man aprun)

- Default setting: -cc cpu (PEs are bound to a specific core, depending on the -d setting)

- Binding PEs to a specific numa node : -cc numa_node (PEs are not bound to a specific core but cannot ‘leave’ their numa_node)

- No binding: -cc none

- Own binding: -cc 0,4,3,2,1,16,18,31,9,....

aprun Memory Affinity control

Cray XC30 systems use dual-socket compute nodes with 2 dies. For 16-CPU Cray XC30 compute node processors, NUMA nodes 0 and 1 have eight CPUs each (logical CPUs 0-7 and 8-15 respectively). If your application uses Intel Hyperthreading Technology, it is possible to use up to 32 processing elements (logical CPUs 16-23 are on NUMA node 0 and CPUs 24-31 are on NUMA node 1). Even if your PEs and threads are bound to a specific numa_node, the memory they use does not have to be 'local'.

- aprun memory affinity options (see also man aprun)

- Suggested setting is -ss (a PE can only allocate memory local to its assigned NUMA node. If this is not possible, your application will crash.)

Some basic aprun examples

Assuming an XC30 with Sandy Bridge nodes (32 cores per node with Hyperthreading)

Pure MPI application, using all the available cores in a node

aprun -n 32 -j2 ./a.out

Pure MPI application, using only 1 core per node

32 MPI tasks on 32 nodes, with 32*32 cores allocated. This can be done to increase the memory available to each MPI task.

aprun -N 1 -n 32 -d 32 -j2 ./a.out

Hybrid MPI/OpenMP application, 4 MPI ranks per node

32 MPI tasks with 8 OpenMP threads each. OMP_NUM_THREADS needs to be set.

export OMP_NUM_THREADS=8

aprun -n 32 -N 4 -d $OMP_NUM_THREADS -j2 ./a.out
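In the examples above, the product of -N and -d never exceeds the number of logical CPUs per node (16 with -j1, 32 with -j2). A minimal sketch of that sanity check, assuming a hypothetical helper check_placement and the 16 physical cores per node described in this document:

```shell
# Hypothetical helper: check that PEs per node times depth fits on one node.
# $1 = PEs per node (-N), $2 = depth (-d), $3 = stream mode (-j, 1 or 2)
check_placement() {
    local per_node depth mode cpus needed
    per_node=$1
    depth=$2
    mode=$3
    cpus=$((16 * mode))            # assumption: 16 physical cores per node
    needed=$((per_node * depth))
    if [ "$needed" -le "$cpus" ]; then
        echo "ok: $needed of $cpus logical CPUs used per node"
    else
        echo "too many: $needed CPUs requested, only $cpus available" >&2
        return 1
    fi
}

check_placement 4 8 2    # hybrid example: 4 ranks x 8 threads with -j2
check_placement 1 32 2   # one PE per node with -d 32
check_placement 4 8 1 || echo "the same layout does not fit with -j1"
```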

MPI and OpenMP with Intel PE

Intel RTE creates one extra thread when spawning the worker threads. This makes the pinning for aprun more difficult.

Suggestions:

- Running when "depth" divides evenly into the number of "cpus" on a socket

export OMP_NUM_THREADS="<=depth"

aprun -n npes -d "depth" -cc numa_node a.out

- Running when "depth" does not divide evenly into the number of "cpus" on a socket

export OMP_NUM_THREADS="<=depth"

aprun -n npes -d "depth" -cc none a.out

Multiple Program Multiple Data (MPMD)

aprun supports MPMD (Multiple Program Multiple Data).

- Launching several executables which are all part of the same MPI_COMM_WORLD

aprun -n 128 exe1 : -n 64 exe2 : -n 64 exe3

- Notice: Each executable needs a dedicated node. exe1 and exe2 cannot share a node.

Example: The following command needs 3 nodes

aprun -n 1 exe1 : -n 1 exe2 : -n 1 exe3

- Use a script to start several serial jobs on a node:

aprun -a xt -n 1 -d 32 -cc none script.sh

> cat script.sh

./exe1 &

./exe2 &

./exe3 &

wait
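Because executables in an MPMD launch cannot share a node, the node count is the sum of the per-executable node counts, not the total PE count divided by the node size. A sketch under that rule, using a hypothetical helper mpmd_nodes whose "n:N" argument notation (PEs : PEs per node) is invented here for illustration:

```shell
# Hypothetical helper: nodes needed for an MPMD launch.
# Each argument describes one executable as "n:N"
# (total PEs : PEs per node), and each executable gets its own nodes.
mpmd_nodes() {
    local total seg n per_node
    total=0
    for seg in "$@"; do
        n=${seg%%:*}
        per_node=${seg##*:}
        # Round up the nodes of this executable, then add them to the total
        total=$(( total + (n + per_node - 1) / per_node ))
    done
    echo "$total"
}

mpmd_nodes 1:1 1:1 1:1            # prints 3
mpmd_nodes 128:32 64:32 64:32     # prints 8
```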

cpu_lists for each PE

CLE was updated to give threads and processing elements more flexibility in placement. This is ideal for processor architectures whose cores share resources that they may have to wait to use. Separating cpu_lists by colons (:) allows the user to specify the cores used by each processing element and its child processes or threads. Essentially, this gives the user finer granularity when specifying the cpu_list for each processing element.

Here is an example with 3 threads per PE:

aprun -n 4 -N 4 -cc 1,3,5:7,9,11:13,15,17:19,21,23
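Writing long colon-separated cpu_lists by hand is error-prone. The following sketch generates such a list; the helper name build_cc_list and its parameters are hypothetical, and it only covers the regular case of a fixed stride between consecutive CPUs:

```shell
# Hypothetical helper: build a -cc argument with one cpu_list per PE.
# $1 = PEs per node, $2 = threads per PE, $3 = first CPU, $4 = stride
build_cc_list() {
    local npes depth cpu stride pe t list
    npes=$1
    depth=$2
    cpu=$3
    stride=$4
    list=""
    pe=0
    while [ "$pe" -lt "$npes" ]; do
        # A colon separates the cpu_lists of two PEs
        if [ "$pe" -gt 0 ]; then list="$list:"; fi
        t=0
        while [ "$t" -lt "$depth" ]; do
            # A comma separates the CPUs inside one cpu_list
            if [ "$t" -gt 0 ]; then list="$list,"; fi
            list="$list$cpu"
            cpu=$((cpu + stride))
            t=$((t + 1))
        done
        pe=$((pe + 1))
    done
    echo "$list"
}

build_cc_list 4 3 1 2   # prints 1,3,5:7,9,11:13,15,17:19,21,23
```

The output can be passed on directly, for example as -cc "$(build_cc_list 4 3 1 2)".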

Running a batch application with SLURM and ALPS

Submitting batch jobs

The number of required nodes, the required runtime (wall clock time), the job name, the number of threads, and so on can be specified in the job header or as sbatch options on the command line. Here is a very simple hybrid MPI + OpenMP job example (assuming the batch job script is named "myjob.script"):

#!/bin/bash

#SBATCH --job-name=hybrid

#SBATCH --ntasks=64

#SBATCH --ntasks-per-node=4

#SBATCH --cpus-per-task=4

export OMP_NUM_THREADS=4

aprun -n64 -d4 -N4 -j1 a.out

The job is submitted by the sbatch command on one of the login nodes:

sbatch --time=00:20:00 myjob.script

This job will allocate 64 PEs for 20 minutes in total. Each PE will run 4 OpenMP threads. Only 4 PEs run on each node. On our system hornet, nodes are allocated exclusively (only one batch job per node), so the job above allocates 16 nodes.
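In the job script above, the values for -n, -N and -d repeat what was already requested with #SBATCH. One way to keep the two in sync is to derive the aprun options from the environment of the batch script. This is a sketch only: build_aprun_cmd is a hypothetical helper, and it assumes that SLURM_NTASKS, SLURM_NTASKS_PER_NODE and SLURM_CPUS_PER_TASK are set on this system when the corresponding options are given. The aprun -B option described earlier serves the same purpose.

```shell
# Demonstration defaults, used only when running outside a batch job
: "${SLURM_NTASKS:=64}"
: "${SLURM_NTASKS_PER_NODE:=4}"
: "${SLURM_CPUS_PER_TASK:=4}"

# Hypothetical helper: print an aprun command line that matches the
# SLURM request. $1 = executable
build_aprun_cmd() {
    local exe
    exe=$1
    echo "aprun -n $SLURM_NTASKS -N $SLURM_NTASKS_PER_NODE -d $SLURM_CPUS_PER_TASK $exe"
}

export OMP_NUM_THREADS=$SLURM_CPUS_PER_TASK
build_aprun_cmd ./a.out
```

The helper only prints the command so that it can be inspected first. Inside a real job the printed line could then be executed.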

Monitoring batch jobs

- squeue

- shows batch jobs

- sinfo

- view information about SLURM nodes and partitions.

- xtnodestat

- Shows XC nodes allocation and aprun processes

- All allocations are shown: both job running with aprun and batch dedicated nodes

- apstat

- Shows aprun processes status

- apstat : overview

- apstat -a [apid] : info about all the applications or a specific one

- apstat -n : info about the status of the nodes

Deleting batch jobs

- scancel

Environment variables

The SLURM controller will set the following variables in the environment of the batch script.

- BASIL_RESERVATION_ID The reservation ID on Cray systems running ALPS/BASIL only.

- SLURM_CPU_BIND Set to value of the --cpu_bind option.

- SLURM_JOB_ID (and SLURM_JOBID for backwards compatibility) The ID of the job allocation.

- SLURM_JOB_CPUS_PER_NODE Count of processors available to the job on this node. Note the select/linear plugin allocates entire nodes to jobs, so the value indicates the total count of CPUs on the node. The select/cons_res plugin allocates individual processors to jobs, so this number indicates the number of processors on this node allocated to the job.

- SLURM_JOB_DEPENDENCY Set to value of the --dependency option.

- SLURM_JOB_NAME Name of the job.

- SLURM_JOB_NODELIST (and SLURM_NODELIST for backwards compatibility) List of nodes allocated to the job.

- SLURM_JOB_NUM_NODES (and SLURM_NNODES for backwards compatibility) Total number of nodes in the job's resource allocation.

- SLURM_MEM_BIND Set to value of the --mem_bind option.

- SLURM_TASKS_PER_NODE Number of tasks to be initiated on each node. Values are comma separated and in the same order as SLURM_NODELIST. If two or more consecutive nodes are to have the same task count, that count is followed by "(x#)" where "#" is the repetition count. For example, "SLURM_TASKS_PER_NODE=2(x3),1" indicates that the first three nodes will each execute two tasks and the fourth node will execute one task.

- SLURM_NTASKS_PER_CORE Number of tasks requested per core. Only set if the --ntasks-per-core option is specified.

- SLURM_NTASKS_PER_NODE Number of tasks requested per node. Only set if the --ntasks-per-node option is specified.

- SLURM_NTASKS_PER_SOCKET Number of tasks requested per socket. Only set if the --ntasks-per-socket option is specified.

- SLURM_RESTART_COUNT If the job has been restarted due to system failure or has been explicitly requeued, this will be set to the number of times the job has been restarted.

- SLURM_SUBMIT_DIR The directory from which sbatch was invoked.
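The compressed "(x#)" notation of SLURM_TASKS_PER_NODE is awkward to use directly in a script. A minimal sketch that expands it into one count per node, using a hypothetical helper expand_tasks_per_node:

```shell
# Hypothetical helper: expand "2(x3),1" into "2 2 2 1".
expand_tasks_per_node() {
    local spec item count reps out old_ifs
    spec=$1
    out=""
    old_ifs=$IFS
    IFS=','
    for item in $spec; do
        case $item in
            *"(x"*")")
                # "count(xreps)": split into the count and the repetition
                count=${item%%"("*}
                reps=${item#*"(x"}
                reps=${reps%")"}
                ;;
            *)
                count=$item
                reps=1
                ;;
        esac
        while [ "$reps" -gt 0 ]; do
            out="${out:+$out }$count"
            reps=$((reps - 1))
        done
    done
    IFS=$old_ifs
    echo "$out"
}

expand_tasks_per_node "2(x3),1"   # prints 2 2 2 1
```

Inside a batch job the helper would be called as expand_tasks_per_node "$SLURM_TASKS_PER_NODE".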

Example: getting the expanded NODELIST inside a batch job

scontrol show hostname $SLURM_JOB_NODELIST

Examples

Starting 512 MPI tasks (PEs)

#!/bin/bash

#SBATCH --job-name=MPIjob

#SBATCH --ntasks=512

#SBATCH --ntasks-per-node=32

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

export MALLOC_MMAP_MAX_=0

export MALLOC_TRIM_THRESHOLD_=-1

aprun -n 512 -cc cpu -ss -j2 ./a.out

Starting an OpenMP program, using a single node

#!/bin/bash

#SBATCH --job-name=OpenMP

#SBATCH --ntasks=1

#SBATCH --cpus-per-task=32

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

export MALLOC_MMAP_MAX_=0

export MALLOC_TRIM_THRESHOLD_=536870912 # large value

export OMP_NUM_THREADS=32

aprun -n1 -d $OMP_NUM_THREADS -cc cpu -ss -j2 ./a.out

Starting a hybrid job single node, 4 MPI tasks, each with 8 threads

#!/bin/bash

#SBATCH --job-name=hybrid

#SBATCH --ntasks=4

#SBATCH --ntasks-per-node=4

#SBATCH --cpus-per-task=8

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

export MALLOC_MMAP_MAX_=0

export MALLOC_TRIM_THRESHOLD_=-1

export OMP_NUM_THREADS=8

aprun -n4 -N4 -d $OMP_NUM_THREADS -cc cpu -ss -j2 ./a.out

Starting a hybrid job single node, 8 MPI tasks, each with 2 threads

#!/bin/bash

#SBATCH --job-name=hybrid

#SBATCH --ntasks=8

#SBATCH --ntasks-per-node=8

#SBATCH --cpus-per-task=2

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

export MALLOC_MMAP_MAX_=0

export MALLOC_TRIM_THRESHOLD_=-1

export OMP_NUM_THREADS=2

aprun -n8 -N8 -d $OMP_NUM_THREADS -cc cpu -ss -j1 ./a.out

Starting an MPI job on two nodes using only every second core

#!/bin/bash

#SBATCH --job-name=mpi

#SBATCH --ntasks=16

#SBATCH --ntasks-per-node=8

#SBATCH --cpus-per-task=2

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

aprun -n16 -N8 -d 2 -cc cpu -ss -j1 ./a.out

Starting a hybrid job on two nodes using only every second core

#!/bin/bash

#SBATCH --job-name=hybrid

#SBATCH --ntasks=32

#SBATCH --ntasks-per-node=16

#SBATCH --cpus-per-task=2

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

export OMP_NUM_THREADS=2

aprun -j2 -n32 -N16 -d $OMP_NUM_THREADS -cc 0,2:4,6:8,10:12,14:16,18:20,22:24,26:28,30 -ss ./a.out

Starting a MPMD job using 1 master with 32 threads and 16 workers each with 8 threads

#!/bin/bash

#SBATCH --job-name=mpmd

# Note: 5 nodes * 32 cores = 160 cores

#SBATCH --nodes=5

#SBATCH --ntasks-per-node=32

#SBATCH --time=01:00:00

export MPICH_ENV_DISPLAY=1

export MPICH_VERSION_DISPLAY=1

export MPICH_RANK_REORDER_DISPLAY=1

export MALLOC_MMAP_MAX_=0

export MALLOC_TRIM_THRESHOLD_=-1

export OMP_NUM_THREADS=8

# The following is a single command line,

# continued with a backslash to make it easier to read

aprun env OMP_NUM_THREADS=16 -n1 -d16 -N1 -j1 ./master.exe : \
-n16 -N4 -d$OMP_NUM_THREADS -cc cpu -ss -j2 ./worker.exe