- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

CRAY XC40 Using the Batch System

Introduction

The only way to start a parallel job on the compute nodes of this system is to use the batch system. The installed batch system is based on

- the resource management system torque and

- the scheduler moab

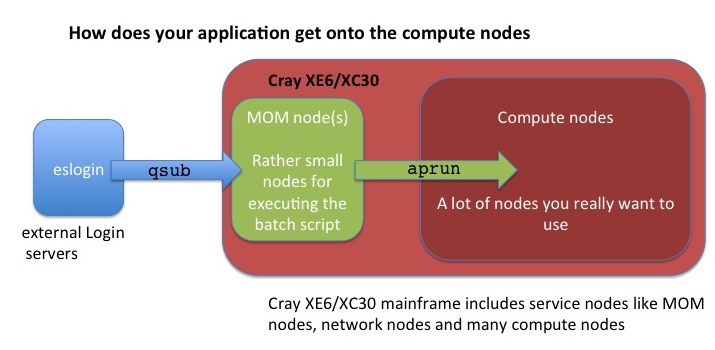

Note also that on CRAY XE6/XC40 systems, user applications are always launched on the compute nodes with the application launcher aprun, which submits applications to the Application Level Placement Scheduler (ALPS) for placement and execution.

Detailed information on how to use the CRAY XC40, together with many examples, can be found in the Cray Programming Environment User's Guide and in Workload Management and Application Placement for the Cray Linux Environment.

- ALPS is always used for scheduling a job on the compute nodes, independent of the programming model you use. We therefore need a few general definitions:

- PE: Processing Element, basically a Unix ‘process’; it can be an MPI task, a CAF image, a UPC thread, ...

- numa_node: the cores and memory on a node with ‘flat’ memory access, basically one of the 2 dies of the Intel processors together with its directly attached memory.

- Thread: a thread is contained inside a process. Multiple threads can exist within the same process and share resources such as memory, while different PEs do not share these resources. Most likely you will use OpenMP threads.

- aprun is the ALPS (Application Level Placement Scheduler) application launcher

- It must be used to run applications on the XE/XC compute nodes, both interactively and in a batch job

- If aprun is not used, the application is launched on the MOM node (and will most likely fail)

- The aprun man page contains several useful examples. At least 3 important parameters need to be controlled:

- The total number of PEs: -n

- The number of PEs per node: -N

- The number of OpenMP threads: -d (the 'stride' between 2 PEs in a node)

- see also understanding aprun

- qsub is the torque submission command for batch job scripts.
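The three aprun parameters determine how many nodes a launch occupies. The following bash function is only an illustration of that arithmetic; the name `nodes_needed` is hypothetical, and it assumes 24 physical cores per node (48 logical CPUs with `-j 2`) as on the XC40.

```shell
#!/bin/bash
# Hypothetical helper: nodes occupied by "aprun -n NPES -N PPN -d DEPTH -j J".
# Assumes 24 physical cores per node; -j 2 doubles this to 48 logical CPUs.
nodes_needed() {
  local npes=$1 ppn=$2 depth=${3:-1} j=${4:-1}
  local cpus_per_node=$(( 24 * j ))
  # Each PE reserves DEPTH CPUs, so PPN * DEPTH must fit on a single node.
  if (( ppn * depth > cpus_per_node )); then
    echo "error: -N $ppn x -d $depth needs more than $cpus_per_node CPUs per node" >&2
    return 1
  fi
  # Round up: a partially filled last node still counts as a whole node.
  echo $(( (npes + ppn - 1) / ppn ))
}

nodes_needed 48 24        # pure MPI job script example: prints 2
nodes_needed 24 4 12 2    # hybrid MPI/OpenMP with -j2: prints 6
```

The error branch mirrors the rule that `-N` times `-d` must not exceed the CPUs of one node; aprun itself remains the authority on what it accepts.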

Writing a submission script is typically the most convenient way to submit your job to the batch system.

You generally interact with the batch system in two ways: through options specified in job submission scripts (these are detailed below in the examples) and by using torque or moab commands on the login nodes. There are three key commands used to interact with torque:

- qsub

- qstat

- qdel

Check the torque man pages for more advanced commands and options:

man pbs

Requesting Resources using batch system TORQUE and ALPS

Batch Mode

Production jobs are typically run in batch mode. Batch scripts are shell scripts containing flags and commands to be interpreted by a shell and are used to run a set of commands in sequence.

- The number of required nodes, cores, wall time and more can be set with "#PBS" lines in the job script header, placed before any executable commands in the script.

#!/bin/bash
#PBS -N job_name
#PBS -l nodes=2:ppn=24
#PBS -l walltime=00:20:00
# Change to the directory that the job was submitted from
cd $PBS_O_WORKDIR
# Launch the parallel job to the allocated compute nodes
aprun -n 48 -N 24 ./my_mpi_executable arg1 arg2 > my_output_file 2>&1

- The job is submitted with the qsub command (all #PBS parameters of the script header can also be set directly as qsub command options).

qsub my_batchjob_script.pbs

- Options given to qsub on the command line override the settings in the batch script:

qsub -N other_name -l nodes=2:ppn=24,walltime=00:20:00 my_batchjob_script.pbs

- The batch script is not necessarily granted resources immediately; it may sit in the queue of pending jobs for some time before its required resources become available.

- At the end of the execution, the output and error files are returned to the submission directory.

- This example will run your executable "my_mpi_executable" in parallel with 48 MPI processes. Torque will allocate 2 nodes to your job for a maximum time of 20 minutes and place 24 processes on each node (one per core). The batch system allocates nodes exclusively to a single job. Once the walltime limit is exceeded, the batch system terminates your job. The job launcher for XC40 parallel jobs (both MPI and OpenMP) is aprun. It needs to be started from a subdirectory of /mnt/lustre_server (your workspace). The aprun example above starts the parallel executable "my_mpi_executable" with the arguments "arg1" and "arg2", using 48 MPI processes with 24 processes placed on each of your allocated nodes (remember that a node of the XC40 system has 24 cores). You need to have nodes allocated by the batch system (qsub) before starting aprun.
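The values 48 and 24 in the script above simply restate the `#PBS -l nodes=2:ppn=24` request. The variant below is a sketch that derives them from the allocation instead. It assumes that Torque exports `PBS_NUM_NODES`, `PBS_NUM_PPN` and `PBS_JOBID` inside the job; please check this on the system before relying on it. The defaults only mirror the header.

```shell
#!/bin/bash
#PBS -N job_name
#PBS -l nodes=2:ppn=24
#PBS -l walltime=00:20:00
# Sketch: derive the aprun PE counts from the allocation instead of hard-coding 48 and 24.
# Assumption: Torque exports PBS_NUM_NODES and PBS_NUM_PPN inside the job; the defaults
# below mirror the #PBS header and are used only when those variables are not set.
nodes=${PBS_NUM_NODES:-2}
ppn=${PBS_NUM_PPN:-24}
npes=$(( nodes * ppn ))
launch=(aprun -n "$npes" -N "$ppn" ./my_mpi_executable arg1 arg2)
echo "Launch command: ${launch[*]}"
# Only launch inside a batch job (assumed: Torque sets PBS_JOBID). Outside a job the
# script just prints the command, which is a cheap way to check the numbers.
if [ -n "${PBS_JOBID:-}" ]; then
  cd "$PBS_O_WORKDIR" || exit 1
  "${launch[@]}" > my_output_file 2>&1
fi
```

With this pattern, changing only the `nodes=` or `ppn=` request is enough to resize the job.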

To query further options of aprun, please use

man aprun
aprun -h

Interactive batch Mode

Interactive mode is typically used for debugging or optimizing code but not for running production code. To begin an interactive session, use the "qsub -I" command:

qsub -I -l nodes=2:ppn=24,walltime=00:30:00

If the requested resources are available and free (in the example above: 2 nodes/24 cores, 30 minutes), then you will get a new session on the mom node for your requested resources. Now you have to use the aprun command to launch your application to the allocated compute nodes. When you are finished, enter logout to exit the batch system and return to the normal command line.

Notes

- Remember, you use aprun within the context of a batch session, and the maximum size of the job is determined by the resources you requested when you launched the batch session. You cannot use the aprun command to use more resources than you reserved with the qsub command. Once a batch session begins, you can only use the resources initially requested, or fewer.

- While your job is running (in Batch Mode), STDOUT and STDERR are written to a file or files in a system directory and the output is copied to your submission directory only after the job completes. Specifying the "qsub -j oe" option here and redirecting the output to a file (see examples above) makes it possible for you to view STDOUT and STDERR while the job is running.

Run job on other Account ID

Unix groups are associated with the project account IDs (ACID). To run a job on a non-default project budget (associated with a secondary group), the group name of this project has to be passed in the group_list:

qsub -W group_list=<groupname> ...

To get your available groups:

id -Gn
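Before submitting with a secondary group, it can help to check that the group really is among yours. A minimal sketch; the function name `has_group` is hypothetical and only wraps `id -Gn`, and `<groupname>` remains a placeholder.

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given group is one of your Unix groups.
has_group() {
  # id -Gn prints all group names on one line; match one name exactly.
  id -Gn | tr ' ' '\n' | grep -qxF -- "$1"
}

# Usage:
#   has_group <groupname> && qsub -W group_list=<groupname> my_batchjob_script.pbs
```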

Usage of a Reservation

For nodes which are reserved for special groups or users, you need to specify an additional option for this reservation:

- For example, a reservation named john.1 is used with the following command:

qsub -W x=FLAGS:ADVRES:john.1 ...

Deleting a Batch Job

qdel <jobID>
canceljob <jobID>

These commands remove jobs from the job queue. If the job is running, qdel will abort it. You can obtain the job ID from the output of the "qstat" command or from the output of the qsub command that submitted your job.
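Instead of remembering the qsub output, it can be kept in a shell variable. A small sketch: qsub prints the full job ID (number, a dot, then the server name), and the hypothetical helper `short_jobid` keeps only the numeric part, which is the form shown in most Moab output.

```shell
#!/bin/bash
# Hypothetical helper: strip the server part from a full Torque job ID.
short_jobid() {
  local full=$1
  echo "${full%%.*}"
}

# Usage on a login node:
#   jobid=$(qsub my_batchjob_script.pbs)
#   checkjob "$(short_jobid "$jobid")"
#   qdel "$jobid"
```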

Status Information

- Status of jobs:

qstat
qstat -a
showq

- Status of queues:

qstat -q
qstat -Q

- Status of job scheduling

checkjob <jobID>
showstart <jobID>

- Status of backfill. This can help you to build small jobs that can be backfilled immediately while you are waiting for the resources to become available for your larger jobs

showbf

- Status of Nodes/System (see also Gathering Application Status and Information on the Cray System)

xtnodestat
apstat

Note: for further details type on the login node:

man qstat
man apstat
man xtnodestat
showbf -h
showq -h
checkjob -h
showstart -h

- Resource Utilization Reporting (RUR) is a tool for gathering statistics on how system resources are used by applications. At HLRS, RUR is configured to write a single file, rur.out, in the user's home directory. The file contains the output of each plugin used by RUR. The plugins are "taskstats", "energy" and "timestamp".

- At the end of your job output file you will find resource information like:

Application 6640730 resources: utime ~49s, stime ~5s, Rss ~8504, inblocks ~15127, outblocks ~2498

where:

- utime: user time used

- stime: system time used

- Rss: maximum resident set size (memory)

- inblocks: block input operations

- outblocks: block output operations

The values are summed over all processes of the application.
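To pick single values out of such a line, for example to compare the memory use of several runs, a short grep/sed pipeline is enough. This is a sketch that assumes the line format shown above; the function name `rur_field` is hypothetical. If the output file contains several applications, one value per application line is printed.

```shell
#!/bin/bash
# Hypothetical helper: print the value of one field (utime, stime, Rss, inblocks,
# outblocks) from the "Application ... resources:" lines of a job output file.
rur_field() {
  local field=$1 file=$2
  # Match "<field> ~<value>" up to the next comma, then drop the "<field> ~" prefix.
  grep -o "${field} ~[^,]*" "$file" | sed "s/^${field} ~//"
}

# Usage:
#   rur_field Rss my_output_file
```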

Limitations

Understanding aprun

Core specialization

System 'noise' on compute nodes may significantly degrade scalability for some applications. Core specialization can mitigate this problem.

- 1 core per node will be dedicated for system work (service core)

- As many system interrupts as possible will be forced to execute on the service core

- The application will not run on the service core

To get core specialization, use aprun -r:

aprun -r1 -n 100 a.out

Thus, one core per node is used for system work and 23 cores are available for computation.

Furthermore, the tool apcount computes the total number of cores required for a given setup that uses core specialization. For further instructions, see

man apcount
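The effect of `-r` on the node count can be estimated by hand. This sketch reproduces only the simple arithmetic (24 cores per node, one PE per core, R cores reserved per node); it is not a replacement for apcount, and the name `spec_nodes` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: nodes needed for NPES PEs when R cores per node are reserved
# with "aprun -rR". Assumes one PE per core and 24 cores per node by default.
spec_nodes() {
  local npes=$1 r=$2 cores=${3:-24}
  local usable=$(( cores - r ))
  echo $(( (npes + usable - 1) / usable ))
}

spec_nodes 100 1   # aprun -r1 -n 100 a.out: 23 usable cores per node, prints 5
```

For 100 PEs the reservation happens not to cost an extra node, but for 120 PEs it does (6 nodes with `-r1` instead of 5 without).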

Hyperthreading

Cray XC40 compute nodes are always booted with hyperthreading switched ON. Users can choose to run with one or two PEs or threads per core. The default is one. You can make your choice at runtime:

aprun -n### -j1 … -> Single Stream mode, one rank per core (default)

aprun -n### -j2 … -> Dual Stream mode, two ranks per core

The numbering of the cores in single stream mode is 0-11 for die 0 and 12-23 for die 1. In dual stream mode, the numbering of the first 24 cores stays the same, and cores 24-35 are on die 0 and 36-47 on die 1. Note that this makes the numbering of the cores in hyperthread mode non-contiguous:

| Mode | cores on die 0 | cores on die 1 |
|---|---|---|
| Single stream (-j1) | 0-11 | 12-23 |
| Dual stream (-j2) | 0-11, 24-35 | 12-23, 36-47 |

Note: the cores are assigned consecutively, which in hyperthread mode means the order 0,24,1,25,...,11,35,12,36,...,23,47.
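The dual-stream assignment order follows directly from the numbering in the table: logical CPU c and CPU c+24 are the two hardware threads of physical core c. A sketch that prints this order; the function name `ht_order` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: print the order in which logical CPUs are filled in
# dual stream mode (-j2), assuming CPU c and CPU c+CORES share physical core c.
ht_order() {
  local cores=${1:-24} c
  local -a order=()
  for (( c = 0; c < cores; c++ )); do
    order+=("$c" "$(( c + cores ))")
  done
  local IFS=,
  echo "${order[*]}"
}

ht_order   # 0,24,1,25,... ending with 23,47
```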

aprun CPU Affinity control

CLE can dynamically distribute work by allowing PEs and threads to migrate from one CPU to another within a node. In some cases, moving PEs or threads from CPU to CPU increases cache and translation lookaside buffer (TLB) misses and therefore reduces performance. The CPU affinity options make it possible to bind a PE or thread to a particular CPU or a subset of CPUs on a node.

- aprun CPU affinity options (see also man aprun)

- Default setting: -cc cpu (PEs are bound to a specific core, depending on the -d setting)

- Binding PEs to a specific NUMA node: -cc numa_node (PEs are not bound to a specific core but cannot ‘leave’ their numa_node)

- No binding: -cc none

- Own binding: -cc 0,4,3,2,1,16,18,31,9,....

aprun Memory Affinity control

Cray XC40 systems use dual-socket compute nodes with 2 dies. For 24-CPU Cray XC40 compute node processors, NUMA nodes 0 and 1 have 12 CPUs each (logical CPUs 0-11 and 12-23, respectively). If your applications use Intel Hyperthreading Technology, it is possible to use up to 48 processing elements (logical CPUs 0-11 as well as 24-35 are on NUMA node 0, and CPUs 12-23 as well as 36-47 are on NUMA node 1). Even if your PEs and threads are bound to a specific numa_node, the memory they use does not have to be ‘local’.

- aprun memory affinity options (see also man aprun)

- Suggested setting is -ss (a PE can only allocate memory local to its assigned NUMA node; if this is not possible, your application will crash).
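When writing an own -cc list together with -ss, it is useful to know which NUMA node each logical CPU belongs to. Following the numbering above, this is a one-line calculation; the function name `numa_of_cpu` is hypothetical and the mapping assumes a 24-core XC40 node.

```shell
#!/bin/bash
# Hypothetical helper: NUMA node of a logical CPU on a 24-core XC40 node
# (0-11 and 24-35 on node 0, 12-23 and 36-47 on node 1).
numa_of_cpu() {
  local cpu=$1
  echo $(( (cpu % 24) / 12 ))
}

# Example: list the NUMA node of each CPU in a candidate -cc list.
for cpu in 0 4 12 30 40; do
  echo "cpu $cpu -> NUMA node $(numa_of_cpu "$cpu")"
done
```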

Some basic aprun examples

Assuming an XC40 with Haswell nodes (48 logical cores per node with Hyperthreading)

Pure MPI application, using all the available cores in a node

aprun -n 48 -N 48 -j2 ./a.out

Pure MPI application, using only 1 core per node

24 MPI tasks on 24 nodes, with 24*24 cores allocated. This can be done to increase the memory available to each MPI task.

aprun -n 24 -N 1 -d24 ./a.out

Hybrid MPI/OpenMP application, 4 MPI ranks per node

24 MPI tasks with 12 OpenMP threads each; OMP_NUM_THREADS needs to be set:

export OMP_NUM_THREADS=12
aprun -n 24 -N 4 -d $OMP_NUM_THREADS -j2 ./a.out

MPI and OpenMP with Intel PE

The Intel runtime environment (RTE) creates one extra thread when spawning the worker threads. This makes correct, efficient pinning more difficult for aprun. In the default setting (OMP_NUM_THREADS=$omps and aprun -d $num_d), the threads are scheduled round robin, with the extra thread as the second thread on the second CPU, while at the end two application threads (the first and the last one) are both placed on the first CPU. This results in a significant performance degradation.

But this extra thread usually has no significant workload. Thus, this extra thread does not influence the performance of an application thread, when it is located on the same cpu.

Thus, we suggest adding the -cc depth option. As a result, all threads can migrate with respect to the specified cpumask. For example when using:

export OMP_NUM_THREADS=$omps
aprun -n $npes -N $ppn -d $OMP_NUM_THREADS -cc depth a.out

all $omps computational threads will be located each on a single cpu and the extra thread on one of these.
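The problem with the default setting described above can be made visible with a small model. This is a simplified sketch, not a query of the real placement: it assumes that the helper thread is started second and that all threads are assigned round robin over the `-d` CPUs of one PE. The function name `place_threads` is hypothetical.

```shell
#!/bin/bash
# Simplified model: OMPS application threads plus one Intel helper thread (started second)
# are placed round robin over the D CPUs that aprun reserves for one PE (-d D).
place_threads() {
  local omps=$1 d=$2 t
  local -a names=("app0" "helper")
  for (( t = 1; t < omps; t++ )); do
    names+=("app$t")
  done
  for (( t = 0; t < ${#names[@]}; t++ )); do
    echo "${names[t]} -> cpu$(( t % d ))"
  done
}

place_threads 4 4
```

For 4 threads the model puts the helper on cpu1 and both app0 and app3 on cpu0, which is the doubled-up first CPU described above; with `-cc depth` the threads may migrate within the PE's CPU mask instead.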

Multiple Program Multiple Data (MPMD)

aprun supports MPMD – Multiple Program Multiple Data.

- Launching several executables which all are part of the same MPI_COMM_WORLD

aprun -n 96 exe1 : -n 48 exe2 : -n 48 exe3

- Note: each executable needs dedicated nodes; exe1 and exe2 cannot share a node.

Example: the following command needs 3 nodes:

aprun -n 1 exe1 : -n 1 exe2 : -n 1 exe3

- Use a script to start several serial jobs on a node:

aprun -a xt -n 1 -d 24 -cc none script.sh

> cat script.sh
#!/bin/bash
./exe1 &
./exe2 &
./exe3 &
wait
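Because every executable of an MPMD launch gets its own nodes, the node count is the sum of the per-executable counts, each rounded up. A sketch of that sum; the function name `mpmd_nodes` and its `npes:ppn` argument format are hypothetical, and 24 PEs per node is assumed when ppn is omitted.

```shell
#!/bin/bash
# Hypothetical helper: nodes needed by an MPMD aprun line. Each argument is "npes" or
# "npes:ppn" for one executable; ppn defaults to 24. Executables never share a node.
mpmd_nodes() {
  local total=0 spec npes ppn
  for spec in "$@"; do
    npes=${spec%%:*}
    ppn=24
    [[ $spec == *:* ]] && ppn=${spec#*:}
    total=$(( total + (npes + ppn - 1) / ppn ))
  done
  echo "$total"
}

mpmd_nodes 96 48 48   # aprun -n 96 exe1 : -n 48 exe2 : -n 48 exe3, prints 8
mpmd_nodes 1 1 1      # aprun -n 1 exe1 : -n 1 exe2 : -n 1 exe3, prints 3
```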

cpu_lists for each PE

CLE was updated to give threads and processing elements more flexibility in placement. This is ideal for processor architectures whose cores share resources and may therefore have to wait to use them. Separating cpu_lists with colons (:) allows the user to specify the cores used by each processing element and its child processes or threads. Essentially, this gives the user finer granularity to specify a cpu_list for each processing element.

Here is an example with 4 PEs of 3 threads each:

aprun -n 4 -N 4 -cc 1,3,5:7,9,11:13,15,17:19,21,23 ./a.out
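Longer cpu_lists are tedious to type by hand. The sketch below builds a colon-separated list with one comma-separated cpu_list per PE from a start CPU and a stride; the function name `cc_lists` and its parameters are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: build a -cc argument with one cpu_list per PE.
# Arguments: number of PEs, threads per PE, first CPU (default 0), stride (default 1).
cc_lists() {
  local npes=$1 nthr=$2 first=${3:-0} stride=${4:-1}
  local pe t
  local -a lists=() cpus
  for (( pe = 0; pe < npes; pe++ )); do
    cpus=()
    for (( t = 0; t < nthr; t++ )); do
      cpus+=("$(( first + (pe * nthr + t) * stride ))")
    done
    lists+=("$(IFS=,; echo "${cpus[*]}")")   # CPUs of one PE, comma-separated
  done
  (IFS=:; echo "${lists[*]}")                # PEs separated by colons
}

cc_lists 4 3 1 2   # prints 1,3,5:7,9,11:13,15,17:19,21,23
```

The result can be passed directly, e.g. `aprun -n 4 -N 4 -cc "$(cc_lists 4 3 1 2)" ./a.out`.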

Job Examples

This batch script template serves as the basis for the aprun examples given later.

#!/bin/bash
#PBS -N <job_name>
#PBS -l nodes=<number_of_nodes>:ppn=24
#PBS -l walltime=00:01:00
cd $PBS_O_WORKDIR
# This is the directory where this script and the executable are located.
# You can choose any other directory on the lustre file system.
export OMP_NUM_THREADS=<nt>
<aprun_command>

The keywords <job_name>, <number_of_nodes>, <nt>, and <aprun_command> have to be replaced, and the walltime adapted accordingly (one minute is given in the template above). The OMP_NUM_THREADS environment variable is only important for applications using OpenMP. Please note that the Cray compiler recognizes OpenMP directives by default; this can be turned off with the -hnoomp option. For the Intel, GNU, and PGI compilers, the corresponding flag has to be used to enable OpenMP recognition.
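Filling in the template can be scripted with a here-document. This is a sketch with a hypothetical helper `make_job`; its arguments replace <job_name>, <number_of_nodes>, <nt> and <aprun_command>, and the walltime defaults to the template's one minute. `<exe>` stays a placeholder for your application.

```shell
#!/bin/bash
# Hypothetical helper: print a job script based on the template above.
# Arguments: job name, number of nodes, OpenMP threads, aprun command, [walltime].
make_job() {
  local name=$1 nodes=$2 nt=$3 aprun_cmd=$4 walltime=${5:-00:01:00}
  # Unquoted EOF expands the arguments; \$PBS_O_WORKDIR stays literal for the job.
  cat <<EOF
#!/bin/bash
#PBS -N $name
#PBS -l nodes=$nodes:ppn=24
#PBS -l walltime=$walltime
cd \$PBS_O_WORKDIR
export OMP_NUM_THREADS=$nt
$aprun_cmd
EOF
}

# Usage (pure OpenMP job; single quotes keep $OMP_NUM_THREADS for the job to expand):
#   make_job omp_test 1 24 'aprun -n 1 -d $OMP_NUM_THREADS <exe>' > omp_test.pbs
#   qsub omp_test.pbs
```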

The following parameters for the template above should cover the vast majority of applications and are given for both the XE6 and XC40 platforms at HLRS. Replace the <exe> keyword with your application.

Job types

The compute nodes of the XC40 platform Hazelhen feature two Haswell processors with 12 cores and one NUMA domain each, resulting in a total of 24 cores and 2 NUMA domains per node. One conceptual difference between the Interlagos nodes on the XE6 and the Haswell nodes on the XC40 is the Hyperthreading feature of the Haswell processor. Hyperthreading is always enabled at boot time; whether it is used is controlled by the -j option to aprun. Using -j 2 enables Hyperthreading, while -j 1 (the default) does not. With Hyperthreading enabled, a compute node on the XC40 provides 48 logical cores instead of 24.

- Description: Serial application (no MPI or OpenMP)

<number_of_nodes>: 1
<aprun_command>: aprun -n 1 <exe>

- Description: Pure OpenMP application (no MPI)

<number_of_nodes>: 1
<nt>: 24
<aprun_command>: aprun -n 1 -d $OMP_NUM_THREADS <exe>

Comment: You can vary the number of threads from 1-24.

- Description: Pure MPI application on two nodes fully packed (no OpenMP) with Hyperthreads

<number_of_nodes>: 2
<aprun_command>: aprun -n 96 -N 48 -j 2 <exe>

- Description: Pure MPI application on two nodes fully packed (no OpenMP) without Hyperthreads

<number_of_nodes>: 2
<aprun_command>: aprun -n 48 -N 24 -j 1 <exe>

Comment: Here you can also omit the -j 1 option as it is the default. This configuration corresponds to the wide-AVX case on the XE6 nodes.

- Description: Mixed (Hybrid) MPI OpenMP application on two nodes with Hyperthreading.

<number_of_nodes>: 2
<nt>: 2
<aprun_command>: aprun -n 48 -N 24 -d $OMP_NUM_THREADS -j 2 <exe>

General remarks

aprun allows you to start an application with more OpenMP threads than there are compute cores available. This oversubscription results in a substantial performance degradation. The same happens if the -d value is smaller than the number of OpenMP threads used by the application. Furthermore, the Intel programming environment spawns an additional helper thread per processing element, which can lead to oversubscription. Here, the -cc numa_node or -cc none option to aprun can be used to avoid oversubscribing the hardware. The default behavior, i.e. if no -cc is specified, is the same as -cc cpu, which means that each processing element and thread is pinned to a processor. Please consult the aprun man page. Another popular aprun option is -ss, which restricts memory allocation to the NUMA node to which the processing element or thread is constrained. The xthi.c utility can be used to check the affinity of threads and processing elements.

The qsub arguments specific to CRAY XE6 systems (mppwidth, mppnppn, mppdepth, feature) are deprecated in this batch system version. Most of the functionality of these old CRAY qsub arguments is still available in this version. Nevertheless, we recommend not using these qsub arguments anymore. Please always use the syntax shown in the examples of this document:

qsub -l nodes=2:ppn=24 <myjobscript>

(replaces: qsub -l mppwidth=48,mppnppn=24)

- nodes: replacement for mppwidth/mppnppn (the number of nodes, i.e. mppwidth divided by mppnppn)

- ppn: replacement for mppnppn

Please note that in the examples above, keywords such as nodes or ppn have been specified directly in the script via #PBS lines. In the qsub example just above, the keywords are specified on the command line, which is also allowed. However, you cannot specify the keywords both in the script and on the command line.
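Old job scripts can be converted with the rule nodes = mppwidth / mppnppn and ppn = mppnppn. A sketch of this conversion; the function name `mpp_to_nodes` is hypothetical, and a width that is not a multiple of mppnppn is rounded up to whole nodes.

```shell
#!/bin/bash
# Hypothetical helper: translate deprecated mppwidth/mppnppn values into nodes/ppn.
mpp_to_nodes() {
  local mppwidth=$1 mppnppn=$2
  echo "nodes=$(( (mppwidth + mppnppn - 1) / mppnppn )):ppn=$mppnppn"
}

mpp_to_nodes 48 24   # prints nodes=2:ppn=24, i.e. qsub -l nodes=2:ppn=24
```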

Special Jobs / Special Nodes

Pre- and Postprocessing/Visualization nodes with large memory

11 visualisation nodes are integrated into the external nodes of Hazelhen.

- 3 nodes are equipped with 512 GB of main memory. Access to a single node is possible by using the node feature mem512gb.

- 3 nodes are equipped with 128 GB of main memory. Access to a single node is possible by using the node feature mem128gb.

- 5 nodes are equipped with 256 GB of main memory. Access to a single node is possible by using the node feature mem256gb.

user@eslogin00X> qsub -I -lnodes=1:mem512gb

Two multi-user SMP nodes are equipped with 1.5 TB of memory, and a third node is equipped with 1 TB of memory. Access to these nodes is possible by using the node feature smp and the smp queue. Access is scheduled by the amount of required memory, which has to be specified with the vmem resource.

user@eslogin00X> qsub -I -lnodes=1:smp:ppn=1 -q smp -lvmem=5gb

Test jobs

A special queue test is available for small and short test jobs with very high priority. Limits are:

- 1 job per user

- 25 minutes walltime

- 384 nodes per job

- only 400 nodes in total

user@eslogin00X> qsub -lnodes=16:ppn=24 -q test mybatchjobscript
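A request can be checked against the per-job limits of the test queue before submitting. This sketch checks only the node count (384) and walltime (25 minutes) listed above and expects the walltime as HH:MM:SS; the per-user and total-node limits are enforced by the batch system and are not checked. The function name `fits_test_queue` is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: succeed if NODES and WALLTIME (HH:MM:SS) fit the per-job
# limits of the test queue listed above.
fits_test_queue() {
  local nodes=$1 walltime=$2 h m s
  IFS=: read -r h m s <<< "$walltime"
  # 10# forces base 10, so values like "08" are not read as octal.
  local seconds=$(( 10#$h * 3600 + 10#$m * 60 + 10#$s ))
  (( nodes <= 384 && seconds <= 25 * 60 ))
}

# Usage:
#   fits_test_queue 16 00:20:00 && qsub -lnodes=16:ppn=24,walltime=00:20:00 -q test mybatchjobscript
```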

CCM jobs

The Cluster Compatibility Mode (CCM) is a software solution that provides the services needed to run most cluster-based independent software vendor (ISV) applications out-of-the-box with some configuration adjustments.

Note: CCM is only available for some users, not by default! If you need this feature, please ask your project manager for access to CCM.

Separated output

If you need to separate the output of your job, you can write a separate file for each rank using a wrapper shell script:

export PMI_NO_FORK=1
aprun -n24 -N24 bash -c "<exe> >& log.\$ALPS_APP_PE"

OR

use the ALPS_STD..._SPEC variable:

export PMI_NO_FORK=1
export ALPS_STDOUTERR_SPEC=<Outputdir>
aprun ...

You will then get the following files in your output directory:

oe00000, oe00001, .... oe00099

It is also possible to separate stdout and stderr:

ALPS_STDOUT_SPEC=<output dir> -> files with extension 'o'
ALPS_STDERR_SPEC=<output dir> -> files with extension 'e'
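The `bash -c` variant of the per-rank output needs careful quoting of `$ALPS_APP_PE`. A small wrapper script avoids this. This is a sketch: the file name `rank_log.sh` and the function name `rank_log_wrapper` are hypothetical, and it relies on ALPS setting `ALPS_APP_PE` for each PE, as the `bash -c` example does.

```shell
#!/bin/bash
# rank_log.sh (hypothetical name): run the given command with stdout and stderr
# redirected to log.<PE>, using the PE number that ALPS puts in ALPS_APP_PE.
rank_log_wrapper() {
  # Abort with an error if ALPS_APP_PE is missing (e.g. not started by aprun),
  # instead of letting all ranks write to the same file.
  local pe="${ALPS_APP_PE:?ALPS_APP_PE is not set}"
  "$@" > "log.${pe}" 2>&1
}

# When executed with arguments, wrap the given command.
if [ "$#" -gt 0 ]; then
  rank_log_wrapper "$@"
fi
```

Usage: make the script executable, then run `export PMI_NO_FORK=1` followed by `aprun -n24 -N24 ./rank_log.sh <exe>`.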