- Information contained in the HLRS Wiki is not legally binding and HLRS is not responsible for any damages that might result from its use -

CRAY XC40 Hardware and Architecture

From HLRS Platforms


Revision as of 15:43, 10 November 2014

Installation Step 2 (Hornet production system)

Summary Hornet Production system (Phase 1 Step 2)

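| Cray Cascade XC40 Supercomputer | Step 2 |
|---|---|
| Performance | 3.79 Pflops |
| Cray Cascade Cabinets | 21 |
| Number of Compute Nodes | 3944 (dual socket) |
| Compute Processors | 3944 * 2 = 7888 Intel Haswell E5-2680v3, 2.5 GHz, 12 cores, 2 HT/core |
| Compute Memory on Scalar Processors | DDR4 |
| Interconnect | Cray Aries |
| User Storage | 5.4 PB |
| Cray Linux Environment (CLE): Compute Node Linux; Cluster Compatibility Mode (CCM); Data Virtualization Services (DVS) | Yes |
| PGI Compiling Suite (Fortran, C, C++) including Accelerator | 25 users (shared with Step 1) |
| Cray Developer Toolkit: Cray Message Passing Toolkit (MPI, SHMEM, PMI, DMAPP, Global Arrays); PAPI; GNU compiler and libraries; Java; environment setup (Modules); Cray Debugging Support Tools (lgdb, STAT, ATP) | Unlimited users |
| Cray Programming Environment: Cray Compiling Environment (Fortran, C, C++); Cray Performance Monitoring and Analysis (Cray PAT, Cray Apprentice2); Cray Math and Scientific Libraries (Cray Optimized BLAS, Cray Optimized LAPACK, Cray Optimized ScaLAPACK, IRT (Iterative Refinement Toolkit)) | Unlimited users |
| Allinea DDT Debugger | 2048 processes (shared with Step 1) |
| Lustre Parallel Filesystem | Licensed on all sockets |
| Intel Composer XE: Intel C++ Compiler XE; Intel Fortran Compiler XE; Intel Parallel Debugger Extension; Intel Integrated Performance Primitives; Intel Cilk Plus; Intel Parallel Building Blocks; Intel Threading Building Blocks; Intel Math Kernel Library | 10 seats |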

For detailed information see XC40-Intro
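The processor count and the performance figure in the Step 2 summary can be cross-checked with a little arithmetic. The following is a minimal sketch, not an official HLRS calculation: node, socket, core and clock figures are taken from the table, while the value of 16 double-precision floating-point operations per cycle per core (two 256-bit FMA units on Haswell) is an assumption that the table does not state.

```python
# Figures taken from the Step 2 summary table.
NODES = 3944
SOCKETS_PER_NODE = 2
CORES_PER_SOCKET = 12
CLOCK_HZ = 2.5e9

# Assumption (not in the table): 16 double-precision FLOPs per cycle
# per core, i.e. two 256-bit FMA units on a Haswell core.
FLOPS_PER_CYCLE = 16


def peak_flops(nodes, sockets_per_node, cores_per_socket, clock_hz, flops_per_cycle):
    """Return (sockets, cores, theoretical peak in FLOP/s)."""
    sockets = nodes * sockets_per_node
    cores = sockets * cores_per_socket
    return sockets, cores, cores * clock_hz * flops_per_cycle


sockets, cores, peak = peak_flops(
    NODES, SOCKETS_PER_NODE, CORES_PER_SOCKET, CLOCK_HZ, FLOPS_PER_CYCLE
)
print(f"{sockets} sockets, {cores} cores, {peak / 1e15:.2f} Pflops")
# prints "7888 sockets, 94656 cores, 3.79 Pflops"
```

Under these assumptions the result agrees with the 7888 processors and 3.79 Pflops listed in the table, which suggests that the quoted performance is a theoretical peak at nominal clock rather than a measured value.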

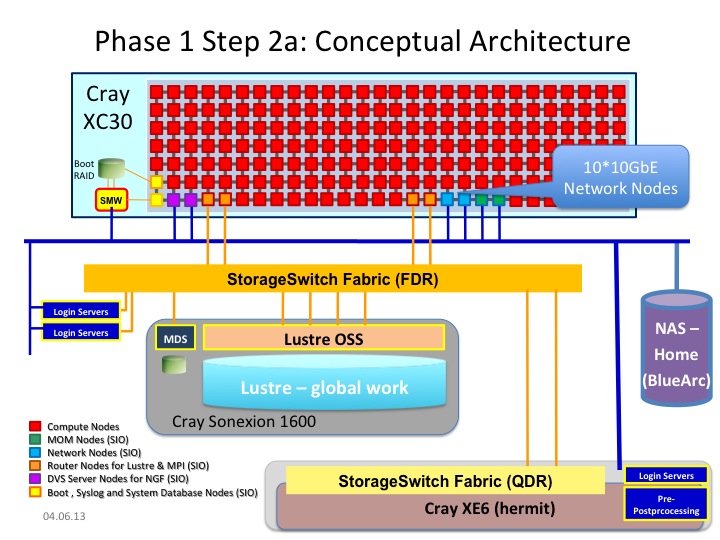

Installation Step 2a (Hornet test system)

Summary Hornet Test system (Phase 1 Step 2a)

| Cray Cascade XC30 Supercomputer | Step 2a |
|---|---|
| Cray Cascade Cabinets | 1 |
| Number of Compute Nodes | 164 |
| Number of Compute Processors | 328 Intel SandyBridge, 2.6 GHz, 8 cores |
| Compute Memory on Scalar Processors | DDR3 1600 MHz |
| I/O Nodes | 14 |
| Interconnect | Cray Aries |
| External Login Servers | 2 |
| Pre- and Post-Processing Servers | - |
| User Storage | (330 TB) |
| Cray Linux Environment (CLE) | Yes |
| PGI Compiling Suite (Fortran, C, C++) including Accelerator | 25 users (shared with Step 1) |
| Cray Developer Toolkit | Unlimited users |
| Cray Programming Environment | Unlimited users |
| Allinea DDT Debugger | 2048 processes (shared with Step 1) |
| Lustre Parallel Filesystem | Licensed on all sockets |
| Intel Composer XE | 10 seats |

Architecture

- System Management Workstation (SMW)
  - The system administrator's console for managing a Cray system: monitoring, installing and upgrading software, controlling the hardware, and starting and stopping the XC40 system.
- Service nodes are classified into:
  - login nodes, through which users access the system
  - boot nodes, which provide the OS for all other nodes, licenses, ...
  - network nodes, which provide e.g. external network connections for the compute nodes
  - Cray Data Virtualization Service (DVS): an I/O forwarding service that can parallelize the I/O transactions of an underlying POSIX-compliant file system
  - the sdb node, for services such as ALPS, Torque, Moab, Slurm, Cray management services, ...
  - I/O nodes, e.g. for Lustre
  - MOM nodes, which place user jobs from the batch system into execution
- Compute nodes
  - are only available to users through the batch system and the Application Level Placement Scheduler (ALPS); see running applications.
  - Each compute node is installed with 64 GB of memory and is attached to the fast interconnect (Cray Aries).
  - Details about the interconnect of the Cray XC series network
- In future, the StorageSwitch Fabric of Step 2a and Step 1 will be connected, so that the Lustre workspace filesystems can be used on the hardware (login servers and preprocessing servers) of both Step 1 and Step 2a.
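Because compute nodes are reachable only through the batch system and ALPS, a job has to state how many nodes it needs and how its processes are spread over them. The sketch below is illustrative only and is not taken from the HLRS documentation: it assumes a pure-MPI run on Step 2 nodes with 24 physical cores each (2 sockets with 12 cores, hyperthreads ignored) and uses the ALPS launcher `aprun` with its `-n` (total processes) and `-N` (processes per node) options. The helper `aprun_command` and the executable name `./my_app` are hypothetical.

```python
import math
import shlex

# 2 sockets x 12 cores per Step 2 compute node (hyperthreads not counted).
CORES_PER_NODE = 24


def aprun_command(executable, total_ranks, ranks_per_node=CORES_PER_NODE):
    """Return (nodes_needed, aprun command line) for a pure-MPI run.

    Hypothetical helper: it only does the node arithmetic and formats
    the command; it does not submit or launch anything.
    """
    if total_ranks < 1:
        raise ValueError("total_ranks must be at least 1")
    if not 1 <= ranks_per_node <= CORES_PER_NODE:
        raise ValueError("ranks_per_node must be between 1 and 24")
    # Never ask for more ranks per node than there are ranks in total.
    per_node = min(ranks_per_node, total_ranks)
    nodes = math.ceil(total_ranks / per_node)
    parts = ["aprun", "-n", str(total_ranks), "-N", str(per_node), executable]
    return nodes, " ".join(shlex.quote(p) for p in parts)


nodes, cmd = aprun_command("./my_app", 96)
print(nodes, cmd)
# prints "4 aprun -n 96 -N 24 ./my_app"
```

The node count is what the batch job would have to request, and the command line is what the job script would run on a MOM node. The exact batch directives depend on the site configuration; see running applications.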